The Build-vs-Buy Question Nobody Answers Honestly

Every developer who needs to process documents — extract data from PDFs, generate reports, transform images, convert between formats — eventually faces the same decision: build the pipeline yourself or pay someone else to run it.

The internet is full of advice on this, and most of it is dishonest. Vendor blogs claim self-hosting is a maintenance nightmare. Open-source advocates claim managed services are overpriced rent-seeking. Neither side acknowledges the real picture: both approaches work, both have legitimate advantages, and the right choice depends on factors specific to your situation.

This guide lays out those factors. No “our way is always better.” No hand-waving over real tradeoffs. Just a framework for making the decision with clear eyes.

What We Mean by “Document Processing”

Before comparing approaches, it helps to define the scope. “Document processing” in this context covers:

- Extraction — pulling structured data from unstructured documents (invoices, contracts, receipts, forms)

- Generation — producing formatted documents from structured data (PDFs, XLSX, DOCX, EPUB)

- Transformation — converting, resizing, compressing, or manipulating images and documents

- Conversion — turning documents from one format to another (PDF to Markdown, DOCX to PDF, images to text via OCR)

Most real-world pipelines combine two or more of these. An invoice processing workflow extracts data, then generates a summary report. A content pipeline transforms images, then generates a formatted document. The combination matters because it affects how much integration work each approach requires.

The Self-Hosted Stack

The self-hosted approach means running open-source tools on your own infrastructure. The typical stack looks something like:

- OCR/Extraction: Tesseract, Kreuzberg, PaddleOCR, or a local LLM with document understanding

- PDF Generation: Puppeteer/Playwright (headless browser), WeasyPrint, or wkhtmltopdf

- Image Processing: Sharp (Node.js), Pillow (Python), ImageMagick, or libvips

- Spreadsheet Generation: openpyxl (Python), ExcelJS (Node.js), Apache POI (Java)

- Format Conversion: LibreOffice in headless mode, Pandoc, or specialized converters

Each tool is battle-tested and widely used. The open-source ecosystem for document processing is mature. These are not experimental projects — they have years of production use behind them.

Where Self-Hosting Wins

Near-zero marginal cost. After the initial setup and infrastructure investment, each additional document costs only compute time. If you process millions of documents per month, the per-document cost of self-hosting approaches zero. For high-volume, predictable workloads, the economics are hard to beat.

Full control over every parameter. Need a specific Tesseract language model? A custom ImageMagick policy? A Puppeteer flag that no managed service exposes? Self-hosting gives you access to every configuration option, every flag, every environment variable. No vendor decides what you can and cannot configure.

No vendor dependency. Your pipeline runs on your infrastructure, using open-source tools you can fork if needed. No API key expiration, no pricing changes, no terms-of-service updates, no vendor going out of business. The tools exist independent of any company.

Data never leaves your network. For organizations with strict data residency or security requirements — government agencies, healthcare providers, financial institutions — keeping documents on-premises may be a hard requirement, not a preference. Self-hosting satisfies this by default.

Offline operation. If your pipeline needs to run in air-gapped environments, on edge devices, or in locations with unreliable internet, self-hosting is the only option. A managed API requires network connectivity by definition.

Where Self-Hosting Hurts

Integration is the real cost. The individual tools work. The pain lives in the glue code between them. Tesseract gives you text; you need to parse it into structured fields. Sharp gives you a buffer; you need to handle format detection, error cases, and memory limits. Puppeteer gives you a PDF; you need to manage the Chrome process lifecycle, handle timeouts, and clean up zombie processes.

Each tool has its own API surface, its own error format, its own quirks. The code connecting them — the format conversions, the retry logic, the error handling — is the most fragile part of the system. It’s also the part that nobody writes tests for, because it’s “just glue.”
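As an illustration of what that glue tends to look like, here is a minimal sketch of a wrapper that runs an external tool with a timeout, kills the whole process group if it hangs, and returns one uniform result shape. The names `ToolResult` and `run_tool` are made up for this example, and the process-group handling assumes a POSIX system.

```python
import os
import signal
import subprocess
from dataclasses import dataclass
from typing import List, Optional


@dataclass
class ToolResult:
    """One result shape for every tool, so callers handle errors in one place."""
    ok: bool
    stdout: bytes
    error: Optional[str] = None


def run_tool(cmd: List[str], timeout_s: float) -> ToolResult:
    """Run an external tool, enforce a timeout, and normalise failures."""
    # start_new_session=True puts the child in its own process group (POSIX),
    # so helper processes it spawns can be killed together with it.
    proc = subprocess.Popen(
        cmd,
        stdout=subprocess.PIPE,
        stderr=subprocess.PIPE,
        start_new_session=True,
    )
    try:
        stdout, stderr = proc.communicate(timeout=timeout_s)
    except subprocess.TimeoutExpired:
        try:
            os.killpg(proc.pid, signal.SIGKILL)  # kill the whole group
        except ProcessLookupError:
            pass  # the process exited on its own in the meantime
        proc.communicate()  # reap the child so it does not linger as a zombie
        return ToolResult(False, b"", f"timed out after {timeout_s}s")
    if proc.returncode != 0:
        return ToolResult(False, stdout, stderr.decode(errors="replace").strip())
    return ToolResult(True, stdout)
```

Every tool in the chain needs some version of this, and each one fails in slightly different ways, which is why the glue grows faster than expected.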

Edge cases compound. OCR works on clean scans. It struggles with photographed documents, skewed pages, low contrast, handwriting, mixed languages, and unusual fonts. Image processing works on standard formats. It breaks on CMYK TIFFs, animated GIFs with transparency, corrupted headers, and images that claim to be JPEG but are actually PNG.

Each tool has its own category of edge cases. When you chain multiple tools together, the edge cases multiply. A malformed PDF that Tesseract handles gracefully might produce output that breaks your parsing regex, which produces data that crashes your report generator. Debugging these failures across tool boundaries is time-consuming.
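One small defence against the "claims to be JPEG but is actually PNG" class of failures is to check a file's leading bytes instead of trusting its extension. The sketch below covers only a handful of formats, and the function names are illustrative rather than taken from any library.

```python
from pathlib import Path
from typing import Optional

# Leading "magic bytes" for a few common formats. Real pipelines need a
# longer list and deeper validation than a prefix check.
_SIGNATURES = {
    b"\xff\xd8\xff": "jpeg",
    b"\x89PNG\r\n\x1a\n": "png",
    b"GIF87a": "gif",
    b"GIF89a": "gif",
    b"%PDF-": "pdf",
}


def sniff_format(data: bytes) -> Optional[str]:
    """Return the format implied by the leading bytes, or None if unknown."""
    for magic, name in _SIGNATURES.items():
        if data.startswith(magic):
            return name
    return None


def extension_matches(filename: str, data: bytes) -> bool:
    """True if the file extension agrees with the sniffed format."""
    claimed = Path(filename).suffix.lower().lstrip(".")
    claimed = {"jpg": "jpeg"}.get(claimed, claimed)  # normalise the alias
    return sniff_format(data) == claimed
```

Rejecting or re-labelling mismatched files at the pipeline entrance keeps the failure close to its cause instead of three tools downstream.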

Infrastructure overhead is ongoing. Self-hosting is not a one-time setup cost. It’s an ongoing commitment:

- Keeping dependencies updated (security patches, new versions)

- Scaling compute to match load (headless Chrome is memory-hungry)

- Monitoring for failures (zombie processes, disk space, memory leaks)

- Managing Docker images (base image updates, dependency conflicts)

- Handling platform-specific issues (libvips compiled without HEIF support, Tesseract without the language data you need)

For a team with dedicated DevOps capacity, this is manageable. For a small team where the same person writes features and manages infrastructure, it’s a significant ongoing tax.

Time-to-value is measured in days or weeks. Getting Tesseract to extract structured data from a specific document type requires training data, configuration tuning, and custom parsing logic. Getting Puppeteer to generate consistent PDFs requires handling font loading, CSS rendering differences, and page break behavior. Each tool’s learning curve is separate.

A developer who has never used these tools should budget days to weeks for a production-ready pipeline, depending on complexity. That’s not a criticism — it’s a realistic assessment of the work involved.

The Managed API Approach

The managed approach means sending documents to an API and getting results back. Instead of running Tesseract, you call an extraction endpoint. Instead of managing Puppeteer, you call a generation endpoint. The vendor handles infrastructure, scaling, edge cases, and updates.

Where Managed Services Win

Time-to-value in minutes. Sign up, get an API key, make a request. The first working extraction, generation, or transformation happens in the time it takes to read the API docs and write a fetch call. No Docker setup, no dependency installation, no configuration tuning.

For prototyping, this speed difference matters. You can validate whether a document processing pipeline solves your business problem before investing in infrastructure. If the approach doesn’t work, you’ve lost an afternoon, not a sprint.

Edge cases are the vendor’s problem. A managed extraction service has processed millions of documents. The malformed PDF that would take your team two days to debug is a known edge case with a fix already deployed. The CMYK TIFF that crashes your Sharp pipeline is handled transparently.

This is not theoretical. Every document processing vendor maintains a growing library of fixes for document formats, encoding issues, font rendering bugs, and format-specific quirks. When you self-host, you maintain your own library of fixes. When you use a managed service, you inherit the vendor’s.

No infrastructure to maintain. No Docker images to update. No memory limits to tune. No zombie processes to clean up. No scaling decisions to make. Within the service’s rate limits, the API handles one request the same way it handles ten thousand. The operational burden on your side is close to zero.

Consistent API surface across operations. If you need extraction, generation, and transformation, a managed platform gives you one auth mechanism, one error format, one response structure, and one billing system. Self-hosting gives you three different tools with three different interfaces. The integration cost of adding a second operation to a managed pipeline is trivial compared to adding a second self-hosted tool.

Where Managed Services Hurt

Per-request cost at scale. Every API call costs money. At low volumes, the cost is negligible — far less than the engineering time to self-host. At high volumes, the math changes. If you process hundreds of thousands of documents per month, the API costs may exceed the infrastructure and engineering costs of self-hosting.

The crossover point depends on the specific service, the document complexity, and your team’s engineering capacity. But it exists, and honest evaluation requires acknowledging it.
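A rough way to locate that crossover is to compare the monthly API bill against the monthly cost of running and maintaining your own stack. The sketch below is deliberately simple: it ignores one-time setup cost, and every input is a placeholder you would replace with your own numbers.

```python
def self_hosted_monthly_cost(
    infra_per_month: float, maintenance_hours: float, hourly_rate: float
) -> float:
    """Recurring cost of self-hosting: infrastructure plus engineering time."""
    return infra_per_month + maintenance_hours * hourly_rate


def break_even_docs_per_month(
    price_per_doc: float,
    infra_per_month: float,
    maintenance_hours: float,
    hourly_rate: float,
) -> float:
    """Monthly volume at which the API bill equals the self-hosted cost."""
    if price_per_doc <= 0:
        raise ValueError("price_per_doc must be positive")
    recurring = self_hosted_monthly_cost(infra_per_month, maintenance_hours, hourly_rate)
    return recurring / price_per_doc


# Placeholder inputs for illustration, not real prices.
volume = break_even_docs_per_month(
    price_per_doc=0.01,
    infra_per_month=200.0,
    maintenance_hours=4.0,
    hourly_rate=100.0,
)
```

With these made-up inputs the recurring self-hosted cost is 600 per month, so the break-even lands at about 60,000 documents per month. Below that volume the API is cheaper, above it self-hosting is.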

Vendor dependency is real. Your pipeline depends on a third-party service. If the vendor changes pricing, deprecates an endpoint, experiences downtime, or goes out of business, your pipeline is affected. You can mitigate this with abstraction layers and fallback logic, but the dependency exists.
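One possible shape for such an abstraction layer is sketched below: the pipeline depends on a small interface, and a wrapper falls back to a second backend when the first one fails. All class names here are hypothetical, and the two backends are stand-ins, not real vendor clients.

```python
from typing import Protocol


class ExtractionError(Exception):
    """Raised by any backend when it cannot process a document."""


class Extractor(Protocol):
    """The only interface the rest of the pipeline is allowed to depend on."""

    def extract(self, document: bytes) -> dict: ...


class FallbackExtractor:
    """Try the primary backend first and fall back to the secondary on failure."""

    def __init__(self, primary: Extractor, secondary: Extractor) -> None:
        self.primary = primary
        self.secondary = secondary

    def extract(self, document: bytes) -> dict:
        try:
            return self.primary.extract(document)
        except ExtractionError:
            return self.secondary.extract(document)


# Stand-in backends for illustration only. Real ones would wrap a vendor
# client or a local OCR pipeline and translate their errors into
# ExtractionError.
class UnavailableVendor:
    def extract(self, document: bytes) -> dict:
        raise ExtractionError("vendor unavailable")


class LocalStub:
    def extract(self, document: bytes) -> dict:
        return {"source": "local", "size": len(document)}


extractor = FallbackExtractor(UnavailableVendor(), LocalStub())
result = extractor.extract(b"%PDF-1.7 ...")
```

The fallback only helps if both backends return the same structure, which is extra work in its own right. That is the part of the mitigation that tends to get underestimated.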

Feature ceiling. A managed API exposes the features the vendor chose to build. If you need a specific configuration option, a custom processing step, or behavior that the API doesn’t support, you’re blocked. You can request it, but you can’t implement it yourself.

Network latency. Every request requires an HTTP round trip. For bulk processing where you’re making thousands of requests, the cumulative latency adds up. Most managed services offer async/webhook-based processing for large workloads, but the latency is inherently higher than local processing.

Data leaves your network. Your documents travel to the vendor’s servers for processing. For many use cases this is fine — the data is not sensitive, or the vendor’s security practices are adequate. For some use cases — classified documents, medical records, certain financial data — it may be a hard constraint.

This is where the vendor’s infrastructure location and data handling practices matter. A service that processes in-memory with zero retention on EU infrastructure is different from one that stores documents on US servers. But even with the strongest guarantees, the data does leave your network during processing.

The Decision Framework

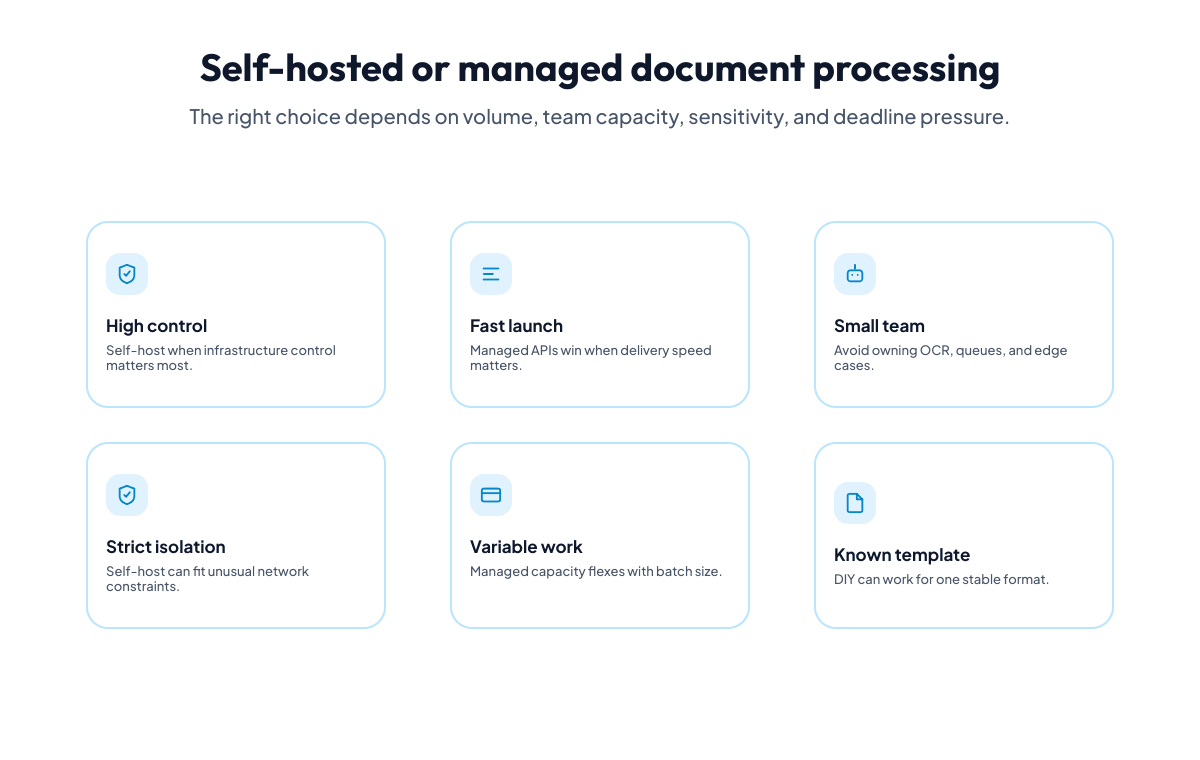

The right choice depends on a handful of concrete factors. Not opinions, not ideology — factors you can evaluate for your specific situation.

Factor 1: Volume and Growth Trajectory

| Monthly volume | Likely winner | Why |
|---|---|---|
| Under 1,000 documents | Managed | Engineering time to self-host far exceeds API cost |
| 1,000 - 50,000 documents | Depends | Evaluate based on other factors below |
| Over 50,000 documents | Evaluate carefully | API costs may exceed infrastructure costs; run the numbers |

Volume alone doesn’t determine the answer. A team processing 100,000 documents with two DevOps engineers has different economics than a solo developer processing 100,000 documents. But volume is the first variable to pin down because it bounds the cost comparison.

Factor 2: Pipeline Complexity

How many different operations does your pipeline chain together?

- Single operation (extraction only, or generation only): Self-hosting is at its simplest because you manage one tool. The integration tax is low.

- Two operations (extract then generate, or transform then generate): The integration cost more than doubles. You now manage two tools, two error formats, two deployment configurations, and the glue code between them.

- Three or more operations: Integration complexity grows faster than linearly. Each new tool adds its own failure modes, and the failure modes interact across tool boundaries. A managed platform with a consistent API surface becomes increasingly attractive.

The complexity factor often surprises teams. Adding a second self-hosted tool is not twice the work — it’s the original work plus the integration work, which is often larger than either tool’s setup alone.

Factor 3: Team Composition

| Team | Self-host viability | Managed viability |
|---|---|---|
| Full-stack with DevOps capacity | High | High |
| Small dev team, no dedicated ops | Low-Medium | High |
| Operations/automation builders, limited coding | Very low | High |
| Solo developer | Low (unless domain expert) | High |

Be honest about this one. “We could self-host” is different from “we have the ongoing capacity to maintain a self-hosted pipeline.” The initial setup is a one-time cost. The maintenance — updating dependencies, debugging edge cases, scaling infrastructure — is indefinite.

Factor 4: Data Sensitivity

| Data type | Self-host advantage |
|---|---|
| Public or low-sensitivity data | Minimal: managed is fine |
| Standard business documents (invoices, reports) | Low: managed services with EU hosting and zero retention are adequate for most compliance frameworks |
| Regulated data (healthcare, financial, classified) | Significant: on-premises or air-gapped may be required |
| Government/defense with data sovereignty requirements | Decisive: self-hosting is likely mandatory |

For the middle categories, the question is not “is managed acceptable?” but “does this specific managed service meet our specific compliance requirements?” A vendor that processes in EU data centers with zero data retention and a Data Processing Agreement is likely to satisfy most EU regulatory frameworks. A vendor that stores documents on US servers may not, at least not without additional transfer safeguards.

Factor 5: Time Constraints

If you need a working pipeline this week, self-hosting a multi-tool stack is unlikely. If you have a quarter to build and optimize, self-hosting becomes viable.

This factor is often underweighted. The business need that drives the pipeline doesn’t pause while you configure Tesseract and debug Puppeteer. A managed service that works today and a self-hosted pipeline you build later is a valid strategy — and often the most pragmatic one.

The Decision Matrix

Putting the factors together:

| Scenario | Recommendation |
|---|---|
| Low volume, multi-step pipeline, small team | Managed: the engineering cost of self-hosting far exceeds API cost |
| High volume, single operation, DevOps capacity | Self-host: the per-document savings justify the infrastructure investment |
| High volume, multi-step pipeline, large team | Hybrid: self-host the high-volume single operation, managed for the rest |
| Regulated data, air-gapped environment | Self-host: no alternative |
| Prototype or MVP validation | Managed: validate the approach before investing in infrastructure |
| Operations team automating workflows | Managed: self-hosting requires engineering capacity they don’t have |

The hybrid approach deserves emphasis. It’s not all-or-nothing. A team might self-host image transformation (high volume, single tool, well-understood) while using a managed API for document extraction (complex, edge-case-heavy, lower volume). The approaches are not mutually exclusive.

The Hidden Costs People Forget

Both approaches have costs that don’t appear in the initial comparison.

Self-Hosting Hidden Costs

- Opportunity cost. Every hour spent debugging a Puppeteer timeout or an ImageMagick policy conflict is an hour not spent on your product. For small teams, this is the most expensive cost and the hardest to quantify.

- Recruitment/knowledge cost. When the person who built the pipeline leaves, the next person inherits a custom system with custom workarounds. Documentation helps, but document processing pipelines accumulate tribal knowledge about edge cases.

- Security patching. Open-source tools have CVEs. Tesseract, ImageMagick, Puppeteer, and their dependencies all require security monitoring and updates. This is ongoing and non-optional.

- Scaling surprises. A pipeline that works for 100 documents per day may fail at 10,000. Headless Chrome is memory-hungry. ImageMagick can consume unbounded memory on certain inputs. Scaling requires load testing, resource limits, and capacity planning that wasn’t part of the initial setup.

Managed Service Hidden Costs

- Vendor lock-in friction. Even with clean API abstractions, switching vendors takes engineering time. Request/response formats differ. Error handling differs. Behavior on edge cases differs. The switching cost is not zero.

- Rate limiting at inconvenient times. Most managed services have rate limits. If your processing load is spiky — month-end reporting, seasonal catalog updates — you may hit limits precisely when you need the service most.

- Feature gaps you discover late. You don’t know what the API can’t do until you need it to do it. Discovering a missing feature after building your pipeline around the service is expensive.

- Billing unpredictability. If your volume is unpredictable, your bill is unpredictable. Managed services with per-request pricing pass volume variance directly to your costs.
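For the rate-limiting case, the usual client-side mitigation is to retry with exponential backoff and jitter. The sketch below assumes your own client code raises a `RateLimited` exception when the API answers with HTTP 429. That exception and the `with_backoff` helper are names invented for this example, not part of any vendor SDK.

```python
import random
import time
from typing import Callable, TypeVar

T = TypeVar("T")


class RateLimited(Exception):
    """Hypothetical: raised by your client code when the API returns HTTP 429."""


def with_backoff(
    call: Callable[[], T],
    max_attempts: int = 5,
    base_delay_s: float = 1.0,
    max_delay_s: float = 30.0,
    sleep: Callable[[float], None] = time.sleep,
) -> T:
    """Retry `call` on RateLimited, waiting longer after each failure."""
    if max_attempts < 1:
        raise ValueError("max_attempts must be at least 1")
    for attempt in range(max_attempts):
        try:
            return call()
        except RateLimited:
            if attempt == max_attempts - 1:
                raise  # out of attempts: surface the error to the caller
            # The cap doubles each attempt; a random wait below the cap
            # ("full jitter") keeps many clients from retrying in lockstep.
            cap = min(max_delay_s, base_delay_s * 2 ** attempt)
            sleep(random.uniform(0, cap))
    raise AssertionError("unreachable")
```

Backoff smooths over short bursts. It does not help if your sustained month-end load exceeds the plan's limit, so that still needs to be checked before you commit.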

A Realistic Comparison for Three Common Pipelines

Pipeline 1: Invoice Data Extraction

The task: Extract vendor name, date, line items, and total from PDF invoices. Hundreds per month.

Self-hosted approach: Tesseract for OCR, custom regex or LLM for field extraction, Python script to orchestrate. Setup: 2-3 days. Ongoing maintenance: 2-4 hours/month debugging extraction failures on unusual invoice formats.

Managed approach: Call an extraction API with a schema defining the fields. Setup: 1 hour. Ongoing maintenance: near zero — new invoice formats are the vendor’s problem.

Verdict at this volume: Managed. The engineering time saved in the first month exceeds a year of API costs.
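To make the "custom regex" step of the self-hosted approach concrete, here is a minimal sketch that pulls two fields out of OCR text. The label wording ("Invoice date:", "Total:") and the ISO date format are assumptions about one hypothetical invoice layout. Every new layout needs its own patterns, which is where the monthly maintenance hours go.

```python
import re
from datetime import date
from decimal import Decimal

# Patterns for ONE assumed layout. \b keeps "Total" from matching inside
# "Subtotal", a typical example of the small traps in this kind of parsing.
_DATE = re.compile(r"Invoice date:\s*(\d{4})-(\d{2})-(\d{2})", re.IGNORECASE)
_TOTAL = re.compile(r"\bTotal(?: due)?:\s*[$€]?\s*([\d,]+\.\d{2})", re.IGNORECASE)


def parse_invoice_text(text: str) -> dict:
    """Extract the invoice date and total from OCR text; missing fields stay None."""
    fields = {"invoice_date": None, "total": None}
    match = _DATE.search(text)
    if match:
        year, month, day = map(int, match.groups())
        fields["invoice_date"] = date(year, month, day)
    match = _TOTAL.search(text)
    if match:
        # Drop thousands separators before converting to an exact decimal.
        fields["total"] = Decimal(match.group(1).replace(",", ""))
    return fields


sample = "ACME GmbH\nInvoice date: 2024-03-31\nSubtotal: 1,000.00\nTotal: 1,190.00\n"
parsed = parse_invoice_text(sample)
```

The sketch leaves out vendor names and line items, which are considerably harder to capture with patterns and are usually where an LLM or a managed extraction API earns its cost.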

Pipeline 2: Product Image Processing for E-Commerce

The task: Resize, compress, convert format, and optionally remove backgrounds for product images. Tens of thousands per month.

Self-hosted approach: Sharp or libvips behind a small service. Setup: 1 day (Sharp is well-documented). Ongoing maintenance: low — image processing is a well-understood problem with few surprises.

Managed approach: Call a transformation API with an operations array. Setup: 30 minutes. Ongoing: API cost scales linearly with volume.

Verdict: Depends on where in that range you sit. At 5,000 images/month, managed is simpler and the cost is modest. At 50,000 images/month, the per-image API cost adds up, and Sharp is stable enough that self-hosting is low-risk. At 500,000 images/month, self-hosting almost certainly wins on cost.

Pipeline 3: Monthly Financial Reporting

The task: Extract data from supplier invoices, aggregate by vendor, generate a formatted XLSX with multiple tabs, and produce a summary PDF. Hundreds of documents per month, output is a handful of reports.

Self-hosted approach: Tesseract + custom parsing for extraction, openpyxl for XLSX, Puppeteer or WeasyPrint for PDF. Three tools, three deployment configurations, and significant glue code. Setup: 1-2 weeks. Ongoing maintenance: 4-8 hours/month across the three tools.

Managed approach: Extraction API for parsing, sheet generation API for XLSX, document generation API for PDF. Three API calls using the same auth, same credit pool, same error format. Setup: 2-3 hours. Ongoing: API cost (modest at this volume) and near-zero maintenance.

Verdict: Managed. The three-tool self-hosted pipeline has high integration complexity and significant ongoing maintenance. The managed version uses one vendor with a consistent interface. The cost differential at hundreds of documents per month strongly favors the managed approach.

The Hybrid Strategy

For teams in the “it depends” range, the most pragmatic approach is often hybrid: start managed, observe your actual usage patterns, then selectively self-host the operations where it makes economic sense.

This works because:

- You validate the pipeline before investing in infrastructure. If the business case doesn’t pan out, you haven’t built and maintained a self-hosted stack for nothing.

- You identify which operations are worth self-hosting. Maybe extraction is complex and edge-case-heavy (keep managed), but image resizing is straightforward and high-volume (self-host).

- You reduce risk. A managed service handles the operations where you lack expertise. Self-hosting handles the operations where you have confidence and volume justifies the investment.

The key is treating the decision as reversible and incremental, not as a one-time architectural commitment.
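In code, keeping the decision reversible mostly means keeping the choice of backend in one place. The sketch below routes each operation through a small table, so moving an operation from managed to self-hosted is a one-line change. The operation names and both handlers are placeholders for illustration.

```python
from typing import Callable, Dict

Handler = Callable[[bytes], bytes]

# Which backend handles which operation. Moving an operation between
# backends means editing this table, not the calling code.
ROUTES: Dict[str, str] = {
    "resize_image": "self_hosted",
    "extract_fields": "managed",
}


def resize_locally(payload: bytes) -> bytes:
    """Placeholder for a local image pipeline."""
    return b"local:" + payload


def extract_via_api(payload: bytes) -> bytes:
    """Placeholder for a call to a managed extraction API."""
    return b"managed:" + payload


BACKENDS: Dict[str, Dict[str, Handler]] = {
    "self_hosted": {"resize_image": resize_locally},
    "managed": {"extract_fields": extract_via_api},
}


def dispatch(operation: str, payload: bytes) -> bytes:
    """Send the payload to whichever backend currently owns the operation."""
    backend = ROUTES[operation]
    try:
        handler = BACKENDS[backend][operation]
    except KeyError:
        raise LookupError(f"{backend} backend has no handler for {operation}") from None
    return handler(payload)
```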

What to Look for in a Managed Service

If you decide managed is the right approach — at least for some of your pipeline — the evaluation criteria matter:

- Data handling. Where are documents processed? Is there data retention? Is processing in-memory or stored to disk? Is a Data Processing Agreement available?

- Infrastructure location. For EU-facing products, EU-hosted processing avoids the GDPR complications of transatlantic data transfers.

- API consistency. If you need multiple operations (extraction + generation + transformation), does the service offer a consistent interface across all of them? Or are you integrating with what amounts to multiple separate products under one brand?

- Pricing model. Per-request, per-page, per-MB, or credit-based? Is the pricing predictable for your workload? Are there hidden fees (bandwidth, storage, overage)?

- Composability. Can the output of one operation feed directly into the input of another without format conversion or middleware? This is the difference between a platform and a collection of APIs.

Making the Call

The build-vs-buy decision for document processing is not about which approach is universally better. It’s about which approach is better for your specific volume, team, timeline, data sensitivity, and pipeline complexity.

Self-hosting wins when you have high volume, simple pipelines, DevOps capacity, and strict data requirements. Managed wins when you have complex multi-step pipelines, limited ops capacity, and value time-to-delivery over per-unit cost optimization.

Most teams fall somewhere in between. That’s where the hybrid approach — start managed, selectively self-host — is the honest recommendation. Not because we’re hedging, but because document processing pipelines evolve. Your volume changes. Your requirements change. Your team changes. The right architecture today might not be the right architecture in a year.

Make the decision that gets you to production fastest with acceptable cost and risk. Revisit it when the facts change.

Where Iteration Layer Fits

We built Iteration Layer as a managed content processing platform with composable APIs — document extraction, document-to-markdown conversion, website extraction, image transformation, image generation, document generation, and sheet generation, all sharing one auth token, one credit pool, and one API style. Everything runs on EU infrastructure with zero data retention.

For teams where managed is the right choice, we think the composability and unified platform matter more than any individual feature. For teams where self-hosting makes sense for part of the pipeline, our APIs can handle the parts where managed wins — the complex, edge-case-heavy operations — while you self-host the rest.

The documentation covers every API. Start the 7-day trial and decide for yourself whether the tradeoffs work for your situation. We’d rather you make an informed decision than a pressured one.