The Security Questionnaire That Started This

You land a new enterprise client. The SOW is signed. The kickoff call goes well. Then legal sends over a 47-page security questionnaire, and question 14 reads:

“List all sub-processors that will have access to customer data, including the nature of processing, data categories involved, and the jurisdiction of processing infrastructure.”

You look at your stack. The PDF parsing goes through a US-based extraction API. The image resizing runs through a CDN provider with edge nodes in 40 countries. The document generation uses a SaaS tool that stores templates in AWS us-east-1. And the OCR step — you’re not actually sure where that runs. The vendor’s docs don’t say.

This is the moment most technical agencies realize that data security isn’t just an engineering problem. It’s a supply chain problem. Every third-party service that touches client data is a link in the chain, and your client’s legal team is going to pull on every one.

Security work feels like friction when the client is waiting for a demo to become a workflow. But it can also be what makes the workflow approvable. Stanford’s 2026 Enterprise AI Playbook found that security requirements often started as barriers and later enabled projects to handle sensitive data. The agency version is straightforward: the more sensitive the client documents, the more valuable a controlled processing path becomes.

This guide covers the practical security considerations for agencies processing client documents through third-party APIs — not as a theoretical compliance exercise, but as the concrete decisions and trade-offs that determine whether you pass that security questionnaire or spend six weeks in back-and-forth with legal.

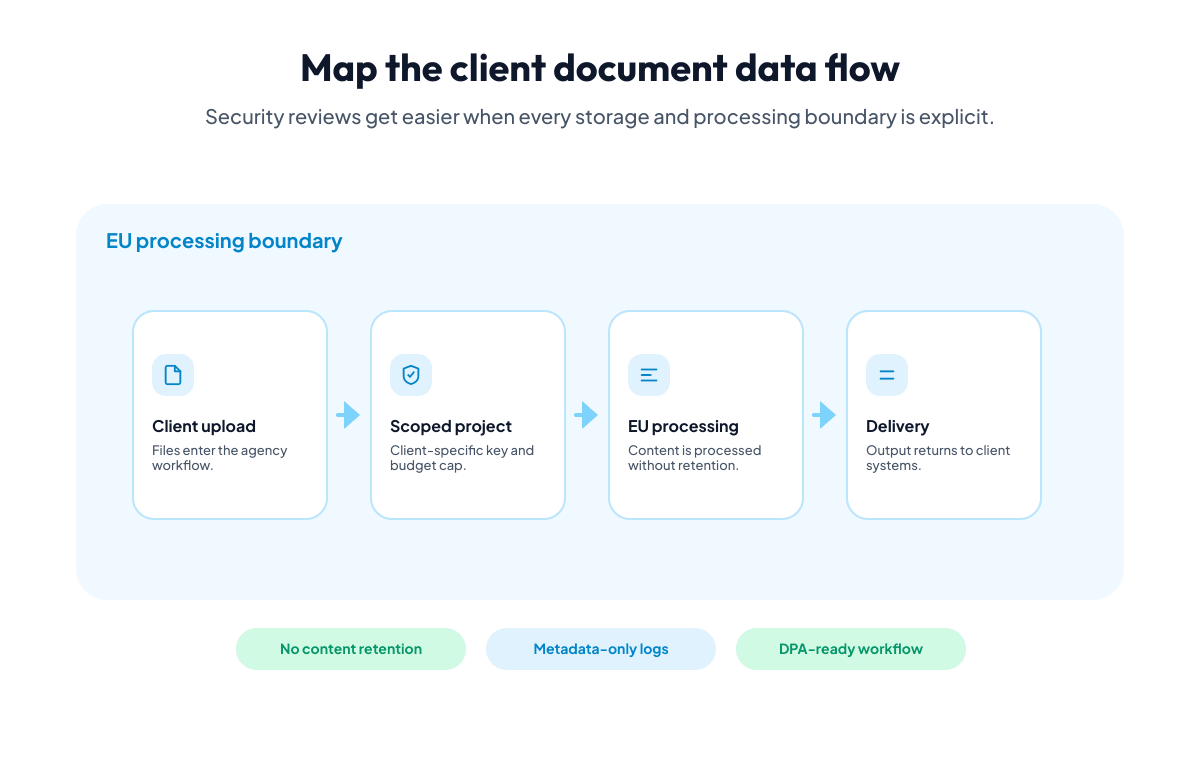

The Data Flow You Need to Map

Before you can answer any security question, you need a clear picture of where client data goes. For a typical agency document workflow, that looks something like this:

- Ingestion. The client uploads a document (contract, invoice, report, manuscript). Where does the file land? Your server? An object store? A managed upload service?

- Processing. The document gets parsed, transformed, converted, or some combination. Which services touch the raw file? Which services see the extracted content?

- Storage. Where do intermediate results live? Where does the final output go? How long are files retained at each step?

- Delivery. How does the processed result get back to the client or into the client’s system? What happens to the file after delivery?

For each step, you need to know:

- Which provider runs the infrastructure

- Which jurisdiction that infrastructure is in

- How long data is retained

- Whether data is encrypted in transit and at rest

- Whether the provider uses the data for anything else (training, analytics, improvement)

Most agencies can answer these questions for their own infrastructure. The gap is almost always in the third-party processing step.
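The per-step questions above can be kept as a small structured record, so that gaps show up as data rather than as a surprise during the questionnaire. The following is a minimal sketch, not a prescribed format: the `ProcessingStep` fields, the `unanswered` helper, and the vendor names are all hypothetical.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass
class ProcessingStep:
    stage: str                                   # ingestion, processing, storage, or delivery
    provider: str
    jurisdiction: Optional[str] = None
    retention: Optional[str] = None
    encrypted_in_transit: Optional[bool] = None
    encrypted_at_rest: Optional[bool] = None
    secondary_use: Optional[bool] = None         # training, analytics, improvement


def unanswered(step: ProcessingStep) -> list[str]:
    """Return the names of the questions that are still open for this step."""
    return [name for name, value in vars(step).items() if value is None]


steps = [
    ProcessingStep("ingestion", "own-object-store", "EU", "30 days", True, True, False),
    # Hypothetical vendor whose docs do not answer any of the questions yet.
    ProcessingStep("processing", "example-ocr-vendor"),
]

for step in steps:
    gaps = unanswered(step)
    if gaps:
        print(f"{step.stage} ({step.provider}): unknown {', '.join(gaps)}")
```

Using `None` for "not yet known" keeps it distinct from a confirmed `False`, which matters when the honest answer to a questionnaire item is "we have not verified this".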

Zero Retention: What It Actually Means

“We don’t store your data” is a claim many API providers make. But the implementation details matter enormously.

What zero retention should mean:

- Files are processed in memory, never written to persistent storage

- No intermediate files on disk during processing

- Raw input files are not accessible after the API response returns

- No file caching between requests

- Log entries contain request metadata (timestamps, operation types, status codes) but not file contents or extracted data

What it often actually means:

- Files are stored temporarily (minutes to hours) in a processing queue

- Intermediate results are cached to speed up repeated operations

- Files are written to disk during processing, then deleted “eventually”

- Log aggregation tools capture request payloads, including base64-encoded files

- Error reporting services capture failed request bodies for debugging

The difference matters. “Temporarily stored for processing” means the file exists on a disk that could be accessed, backed up, or compromised. Even if deletion happens automatically after 5 minutes, during those 5 minutes the file is at rest on infrastructure you don’t control.

Questions to ask your processing provider:

| Question | Good answer | Concerning answer |
|---|---|---|
| Is the file written to disk during processing? | No, processed entirely in memory | “Temporarily written for processing” |
| Are request payloads logged? | Only metadata (endpoint, status, timing) | “We log requests for debugging” |
| Is there any caching of input files? | No file caching between requests | “Files are cached for 1 hour” |
| Can support staff access uploaded files? | No, files are never persisted | “Only with customer permission” |
| Are failed request bodies captured by error tracking? | No, error reports contain only metadata | “We use Sentry/Datadog with full payload capture” |

Iteration Layer’s approach: files are processed in memory and immediately discarded. No files are written to persistent storage. Logs auto-delete after 90 days and contain only request metadata — never file contents or extracted data. Full details are on the security page.

DPA Chains: Your Obligations and Your Vendors’

If you’re an EU-based agency, or if you process personal data of people in the EU on behalf of your clients, GDPR compliance creates a chain of data processing agreements that runs from the controller (your client), to you (the processor), and on to your sub-processors (API providers, cloud infrastructure, SaaS tools).

The chain looks like this:

    Data Subject (end user)
    └── Data Controller (your client)
        └── Data Processor (your agency)
            ├── Sub-processor A (document extraction API)
            ├── Sub-processor B (image processing API)
            ├── Sub-processor C (cloud hosting provider)
            └── Sub-processor D (email delivery service)

Your obligations as a processor:

- You need a DPA with your client (the controller)

- You need DPAs with every sub-processor that touches personal data

- Your client’s DPA with you will typically require that your sub-processor DPAs provide equivalent protections

- You must inform your client of any new sub-processors before they start processing

- You must maintain a record of processing activities under Art. 30 GDPR

The practical problem: many API providers don’t offer DPAs, or only offer them on enterprise plans. Some offer a generic DPA that doesn’t specify the data categories or processing purposes relevant to your use case. And some don’t respond to DPA requests at all.

What a proper sub-processor DPA should include:

| Element | Why it matters |
|---|---|
| Subject matter and purpose of processing | Defines scope: “image transformation” vs. vague “data processing” |
| Categories of personal data | Specifies what’s covered: document contents, metadata, etc. |
| Duration of processing | How long the sub-processor has access |
| Jurisdiction of processing | Where infrastructure is located |
| Technical and organizational measures | Encryption, access controls, incident response |
| Sub-processor’s own sub-processors | The chain continues: cloud providers, CDNs, monitoring tools |
| Audit rights | Your right (and your client’s right) to verify compliance |
| Data deletion obligations | What happens after the processing relationship ends |
| Breach notification timeline | The controller has 72 hours to notify the supervisory authority (GDPR Art. 33), so the sub-processor must notify you without undue delay and well inside that window |

Iteration Layer’s approach: a DPA is available to all customers — no enterprise plan required. It specifies document and image processing as the purpose, covers all personal data contained in uploaded files, and lists the full sub-processor chain. See the Data Processing Agreement for the complete terms.

Sub-Processor Transparency

This is where most vendor evaluations get stuck. You ask “who are your sub-processors?” and get one of three answers:

- A complete list with company names, purposes, and jurisdictions. This is what you need.

- A vague reference to “industry-standard cloud providers.” This tells you nothing.

- No answer. The vendor either doesn’t track their sub-processors or doesn’t want to disclose them.

Sub-processor transparency matters because your DPA with your client typically requires you to list all sub-processors. If your image processing API uses three undisclosed services under the hood — say, an AI model provider for smart cropping, a CDN for temporary file hosting, and a monitoring service that captures request data — you can’t honestly answer your client’s security questionnaire.

A practical sub-processor evaluation checklist:

- Does the vendor publish a sub-processor list?

- Does the list include company names (not just categories)?

- Does the list include the jurisdiction for each sub-processor?

- Does the list include the purpose of each sub-processor?

- Does the vendor notify you before adding new sub-processors?

- Can you object to a new sub-processor?

- Does the vendor have DPAs with all listed sub-processors?

- Are the sub-processor DPAs consistent with your DPA?

The notification requirement is particularly important. GDPR Art. 28(2) requires that processors inform controllers of intended changes to sub-processors, giving the controller the opportunity to object. If your sub-processor (the API provider) adds a new AI service to their pipeline without telling you, and that service processes personal data, you’re in violation of your own DPA with your client — even though you did nothing wrong.
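Because a missed notification lands on you, it can help to check the vendor's published list yourself instead of relying on their email. Here is a minimal sketch, assuming you keep a saved snapshot of each vendor's sub-processor list as a list of records; the record shape, the `new_subprocessors` helper, and the company names are hypothetical.

```python
def new_subprocessors(previous: list[dict], current: list[dict]) -> list[dict]:
    """Return entries in `current` whose name did not appear in `previous`."""
    known = {entry["name"].strip().lower() for entry in previous}
    return [entry for entry in current if entry["name"].strip().lower() not in known]


# Snapshot saved at the last review (hypothetical data).
previous = [
    {"name": "Example Cloud GmbH", "purpose": "hosting", "jurisdiction": "EU"},
]
# List as published today (hypothetical data).
current = previous + [
    {"name": "Example AI Inc.", "purpose": "smart cropping", "jurisdiction": "US"},
]

for entry in new_subprocessors(previous, current):
    print(f"New sub-processor: {entry['name']} ({entry['purpose']}, {entry['jurisdiction']})")
```

Matching on a normalized name is a deliberate simplification. It will not notice a change of purpose or jurisdiction for an existing entry, so a fuller version would compare those fields as well.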

Encryption: In Transit, at Rest, and the Gaps Between

Encryption is table stakes. Every reputable API provider encrypts data in transit (TLS) and at rest (AES-256 or equivalent). But the interesting question is what happens between those two states.

In transit: your files are encrypted over HTTPS between your server and the API. Standard TLS 1.2 or 1.3. Nothing unusual here, and any provider without this is not worth considering.

At rest: if the provider stores files (even temporarily), they should be encrypted on disk. But remember the zero-retention discussion above — if files are never written to disk, encryption at rest is irrelevant because there’s nothing at rest to encrypt. This is a stronger guarantee than encrypted storage.

The gaps:

- In memory during processing. The file exists unencrypted in the processing service’s RAM while operations are running. This is unavoidable — you can’t resize an encrypted image without decrypting it first. The mitigation is processing isolation: each request processed in its own isolated context, with memory cleared after completion.

- Between services. If the provider’s architecture routes your file through multiple internal services (upload service, processing service, delivery service), is the communication between those services encrypted? Internal service-to-service communication is often unencrypted within a VPC. Ask about it.

- In logs and monitoring. As discussed above, if request payloads are logged or captured by error tracking, your file content exists unencrypted in logging infrastructure. This is often the weakest link.
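The logging gap applies to your own application as much as to the provider. One way to close it on your side is to scrub request data before it reaches a logger or error tracker. This is a rough sketch: the key names in `SENSITIVE_KEYS` and the length threshold are assumptions and would need to match your actual payloads.

```python
# Hypothetical field names that carry document content in your own payloads.
SENSITIVE_KEYS = {"file", "file_base64", "content", "extracted_fields"}
# Long strings are treated as probable file data even under an unknown key.
MAX_LOGGED_STRING = 256


def redact(value, key=None):
    """Return a copy of `value` that is safe to log: metadata stays, content goes."""
    if key in SENSITIVE_KEYS:
        return "[REDACTED]"
    if isinstance(value, dict):
        return {k: redact(v, k) for k, v in value.items()}
    if isinstance(value, list):
        return [redact(item) for item in value]
    if isinstance(value, (bytes, bytearray)):
        return f"[REDACTED {len(value)} bytes]"
    if isinstance(value, str) and len(value) > MAX_LOGGED_STRING:
        return f"[REDACTED {len(value)} chars]"
    return value


request = {
    "operation": "document_extraction",
    "file_base64": "A" * 1000,
    "options": {"content": "secret", "pages": [1, 2]},
}
print(redact(request))
```

The length check is a safety net rather than a guarantee: short extracted values under unexpected keys would still pass through, so an explicit allowlist of loggable keys is the stricter alternative.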

Questions for your provider:

| Area | Question |
|---|---|
| Transit | What TLS version is enforced? |
| At rest | Are files written to disk? If so, what encryption? |
| In memory | Are requests processed in isolation? |
| Internal | Is service-to-service communication encrypted? |
| Logging | Do logs contain file content or extracted data? |
| Backups | Are processing results included in backups? |
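The transit question can also be enforced from your side of the connection, independent of what the provider accepts. The sketch below uses only Python's standard `ssl` module; the URL in the usage comment is a placeholder, not a real endpoint.

```python
import ssl


def strict_tls_context() -> ssl.SSLContext:
    """Build a client context that verifies certificates and refuses anything below TLS 1.2."""
    context = ssl.create_default_context()            # certificate and hostname checks are on by default
    context.minimum_version = ssl.TLSVersion.TLSv1_2  # reject TLS 1.0 and 1.1 handshakes
    return context


context = strict_tls_context()

# Usage with the standard library (placeholder URL):
#   import urllib.request
#   urllib.request.urlopen("https://api.example.com/v1/extract", context=context)
```

Setting the minimum explicitly documents the requirement in code, even on Python versions where the default context already starts at TLS 1.2.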

Audit Trails: What You Can Prove

When your client asks “can you demonstrate that document X was processed on date Y and deleted immediately after?” you need an audit trail. But audit trails for document processing are tricky because of a tension: you need to prove processing happened without retaining the processed data.

What a good audit trail includes:

- Timestamp of the processing request

- Type of operation (extraction, transformation, generation)

- File metadata (name, size, mime type) — but not file contents

- Processing duration

- Status (success/failure)

- Error details for failures (without file content)

What it should NOT include:

- The actual file content or base64 data

- Extracted field values (these are personal data)

- Rendered output files

How to build your own audit layer on top of an API:

Log the request metadata on your side before and after the API call. You control what goes into your audit log. A minimal audit entry looks like:

    {
      "timestamp": "2026-04-15T09:23:41Z",
      "operation": "document_extraction",
      "client_id": "acme-corp",
      "project_id": "invoice-processing-q2",
      "file_name": "invoice-2026-0412.pdf",
      "file_size_in_bytes": 284731,
      "status": "success",
      "processing_duration_in_ms": 1847,
      "provider": "iterationlayer",
      "data_jurisdiction": "EU"
    }

This gives you proof of processing without retaining any document content. Store these logs according to your retention policy (typically aligned with your client’s data retention requirements), and you can answer audit questions years later without ever having kept the original files.
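One way to produce entries of this shape is to wrap the processing call so that the audit record is written whether the call succeeds or fails. This is a sketch under assumptions: `audited_call` is a hypothetical helper, `process` stands in for whatever function actually calls your provider, and `write_entry` is whatever sink your audit log uses.

```python
import json
import time
from datetime import datetime, timezone
from typing import Callable


def audited_call(process: Callable[[bytes], object], file_name: str, data: bytes, *,
                 operation: str, client_id: str, project_id: str,
                 provider: str, data_jurisdiction: str,
                 write_entry: Callable[[str], None]) -> object:
    """Run `process(data)` and record a metadata-only audit entry as one JSON line."""
    entry = {
        "timestamp": datetime.now(timezone.utc).strftime("%Y-%m-%dT%H:%M:%SZ"),
        "operation": operation,
        "client_id": client_id,
        "project_id": project_id,
        "file_name": file_name,
        "file_size_in_bytes": len(data),
        "provider": provider,
        "data_jurisdiction": data_jurisdiction,
    }
    started = time.monotonic()
    try:
        result = process(data)
        entry["status"] = "success"
        return result
    except Exception as error:
        entry["status"] = "failure"
        # Record only the exception class: messages can quote document text.
        entry["error_type"] = type(error).__name__
        raise
    finally:
        entry["processing_duration_in_ms"] = round((time.monotonic() - started) * 1000)
        write_entry(json.dumps(entry))


lines: list[str] = []
size = audited_call(len, "invoice-2026-0412.pdf", b"%PDF-1.7 test",
                    operation="document_extraction", client_id="acme-corp",
                    project_id="invoice-processing-q2", provider="example-provider",
                    data_jurisdiction="EU", write_entry=lines.append)
print(lines[0])
```

Neither the file bytes nor the result of `process` is ever placed in the entry, which keeps the log on the "metadata only" side of the line drawn above.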

Jurisdiction: Where Your Data Actually Goes

For EU agencies processing EU client data, jurisdiction is not a nice-to-have. It’s a legal requirement under GDPR, and a practical requirement for most enterprise security questionnaires.

The jurisdictional landscape:

| Provider type | Typical jurisdiction | GDPR implication |
|---|---|---|
| US cloud giants (AWS, GCP, Azure) | US by default, EU regions available | Requires Standard Contractual Clauses (SCCs) or adequacy decision. US region is the default for most services. |
| US SaaS APIs | US only | Data crosses the Atlantic. SCCs required. Post-Schrems II, supplementary measures may be needed. |
| EU-hosted APIs | EU | No international transfer. Simplest compliance path. |
| Self-hosted / on-premise | Your infrastructure | Full control, but you bear the full operational burden. |

The practical difference: when you use a US-hosted service, you need SCCs in your DPA, you need to assess whether US surveillance laws (FISA 702, EO 12333) create risks for the specific data categories you’re processing, and you need to document supplementary measures if applicable. This isn’t impossible — thousands of companies do it. But it’s additional legal overhead for every vendor in your stack.

When you use an EU-hosted service, none of that applies. No international transfer means no SCCs, no transfer impact assessment, no supplementary measures. The security questionnaire answer is simple: “All processing occurs within the EU. No personal data leaves EU jurisdiction.”

Iteration Layer runs all processing on EU infrastructure. No data crosses EU borders during processing. Full details are on the security page.

Answering the Security Questionnaire

Let’s go back to that 47-page questionnaire. Here are the questions agencies encounter most frequently, and how to structure your answers when you’re using a third-party processing API.

“List all sub-processors”

How to answer: provide a table with the provider name, purpose, data categories, and jurisdiction. Be specific about what each provider does.

| Sub-processor | Purpose | Data categories | Jurisdiction |
|---|---|---|---|
| Iteration Layer | Document extraction, image transformation, document generation | Document content, image files, metadata | EU |
| [Your hosting provider] | Application hosting | Application data, user accounts | [Jurisdiction] |
| [Your email provider] | Transactional email | Email addresses, notification content | [Jurisdiction] |
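If you keep your sub-processor inventory as data, a table like this can be generated rather than retyped for each questionnaire. Here is a small sketch, with a hypothetical `render_subprocessor_table` helper and made-up rows; the column names simply mirror the table above.

```python
COLUMNS = ("Sub-processor", "Purpose", "Data categories", "Jurisdiction")


def render_subprocessor_table(rows: list[dict]) -> str:
    """Render rows as a pipe table; a row with a missing column raises KeyError."""
    lines = ["| " + " | ".join(COLUMNS) + " |", "|" + "---|" * len(COLUMNS)]
    for row in rows:
        # Escape pipes so a value cannot break the table layout.
        cells = [str(row[column]).replace("|", "\\|") for column in COLUMNS]
        lines.append("| " + " | ".join(cells) + " |")
    return "\n".join(lines)


rows = [
    {
        "Sub-processor": "Example Vendor",   # hypothetical entry
        "Purpose": "OCR",
        "Data categories": "Document content",
        "Jurisdiction": "EU",
    },
]
print(render_subprocessor_table(rows))
```

Failing loudly on a missing column is intentional: an incomplete row is exactly the kind of gap a client's legal team will ask about.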

“How is data encrypted?”

How to answer:

- In transit: TLS 1.2+ for all API communication

- At rest: not applicable for processing (zero retention — files are not persisted)

- At rest for audit logs: [your log storage encryption details]

“What is the data retention period?”

How to answer: distinguish between your own retention and your sub-processors’ retention.

- Processing API: zero retention. Files processed in memory and immediately discarded.

- Your audit logs: [your retention period, aligned with client requirements]

- Your application data: [your policy]

“Where is data processed geographically?”

How to answer: list each processing step and its jurisdiction.

- Document ingestion: [your server location]

- Document/image processing: EU (Iteration Layer)

- Output storage: [your storage location]

- Delivery: [your delivery infrastructure location]

“How are security incidents handled?”

How to answer: describe your incident response process AND your sub-processor notification chain.

- Your own incident response: [your process, timeline, contacts]

- Sub-processor incidents: [how you’re notified, how you notify your client]

- Regulatory notification: within 72 hours per GDPR Art. 33

“Can we audit your processing infrastructure?”

How to answer: distinguish between what you can audit directly and what is covered by your sub-processor’s DPA.

- Your infrastructure: available for audit per DPA terms

- Sub-processor infrastructure: covered by sub-processor DPA audit clauses, third-party certifications, and published security documentation

For Iteration Layer specifically, the security page and DPA cover the technical and organizational measures in detail.

The Composability Advantage for Security

Here’s something that doesn’t show up on security questionnaires but has a real impact on your security posture: vendor count.

A typical document processing workflow might involve:

- A PDF parsing service (vendor A)

- An OCR service (vendor B)

- An image processing CDN (vendor C)

- A document generation tool (vendor D)

- A spreadsheet export service (vendor E)

That’s five vendors, five DPAs, five sub-processor entries, five sets of security documentation to review, five breach notification chains to manage, and five vendors to re-evaluate every time your client renews.

Iteration Layer covers document extraction, document-to-markdown conversion, website extraction, image transformation, image generation, document generation, and sheet generation in a single platform. One vendor, one DPA, one sub-processor entry, one security review.

This isn’t just an administrative convenience. Fewer vendors means:

- Smaller attack surface. Fewer third parties with access to client data.

- Simpler audit trail. All processing goes through one API with one authentication mechanism.

- Faster security reviews. One vendor to evaluate instead of five.

- Cleaner DPA chain. One sub-processor agreement covers all processing types.

- Consistent data handling. The same zero-retention policy applies to every operation, because it’s the same platform.

When your client’s legal team asks “how many third parties touch our data?” the answer “one processing platform for all document and image operations” is meaningfully different from “five specialized services, each with their own data handling policies.”

A Practical Security Checklist for Agency Projects

Use this checklist when setting up a new client project that involves document processing:

Before the project starts

- Map the complete data flow from client upload to final delivery

- List every third-party service that will touch client data

- Verify that each service has a published DPA or will sign one

- Confirm the jurisdiction of each service’s processing infrastructure

- Review each service’s data retention policy

- Check that each service’s sub-processor list is available and current

- Draft the sub-processor section of your DPA with the client

During implementation

- Implement audit logging for all processing requests (metadata only)

- Verify TLS is enforced for all API communication

- Ensure no file content is captured in application logs

- Test that error handling doesn’t leak document content into error reports

- Implement file validation before sending to processing APIs (size limits, type checks)

- Set up monitoring for processing failures (without logging file content)
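The file validation item above can be sketched as a size limit plus a check that the file's leading bytes match its declared type. The limit and the allowlist below are assumptions for illustration; adjust both to what your project and your provider actually accept.

```python
MAX_FILE_SIZE_IN_BYTES = 20 * 1024 * 1024  # assumed limit, not a provider requirement

# Leading "magic bytes" for the types this hypothetical project accepts.
MAGIC_BYTES = {
    "application/pdf": (b"%PDF-",),
    "image/png": (b"\x89PNG\r\n\x1a\n",),
    "image/jpeg": (b"\xff\xd8\xff",),
}


def validate_upload(data: bytes, declared_type: str) -> list[str]:
    """Return a list of problems; an empty list means the file may be sent on."""
    problems = []
    if not data:
        problems.append("file is empty")
    if len(data) > MAX_FILE_SIZE_IN_BYTES:
        problems.append("file exceeds size limit")
    signatures = MAGIC_BYTES.get(declared_type)
    if signatures is None:
        problems.append(f"type not allowed: {declared_type}")
    elif not data.startswith(signatures):
        problems.append("content does not match declared type")
    return problems


print(validate_upload(b"%PDF-1.7\n", "application/pdf"))
print(validate_upload(b"MZ\x90\x00", "application/pdf"))
```

A signature check only confirms that the file starts like the declared type. It is a cheap first filter before the file leaves your infrastructure, not a substitute for the provider's own parsing safeguards.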

Before going live

- Complete the client’s security questionnaire with specific, documented answers

- Review the DPA chain: client DPA, your sub-processor DPAs, consistency between them

- Verify audit log retention aligns with client requirements

- Confirm breach notification process is documented and tested

- Run a test incident response walkthrough

Ongoing

- Monitor sub-processor lists for changes (set calendar reminders to check quarterly)

- Review and renew DPAs before expiration

- Update security documentation when adding new services

- Rotate API keys according to your key management policy

- Enforce audit log retention: delete logs when the retention period expires
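Retention enforcement is easy to postpone, so it helps to make it a scheduled job. The sketch below assumes audit entries are dictionaries carrying a UTC `timestamp` in the `2026-04-15T09:23:41Z` format used earlier; the `split_by_retention` helper and the 365-day period are hypothetical.

```python
from datetime import datetime, timedelta, timezone
from typing import Optional

TIMESTAMP_FORMAT = "%Y-%m-%dT%H:%M:%SZ"


def split_by_retention(entries: list[dict], retention_days: int,
                       now: Optional[datetime] = None) -> tuple[list[dict], list[dict]]:
    """Split entries into (kept, expired) based on their UTC timestamp."""
    now = now or datetime.now(timezone.utc)
    cutoff = now - timedelta(days=retention_days)
    kept, expired = [], []
    for entry in entries:
        stamp = datetime.strptime(entry["timestamp"], TIMESTAMP_FORMAT).replace(tzinfo=timezone.utc)
        (expired if stamp < cutoff else kept).append(entry)
    return kept, expired


entries = [
    {"timestamp": "2026-04-15T09:23:41Z", "operation": "document_extraction"},
    {"timestamp": "2023-01-01T00:00:00Z", "operation": "document_extraction"},
]
kept, expired = split_by_retention(entries, retention_days=365,
                                   now=datetime(2026, 5, 1, tzinfo=timezone.utc))
print(f"keeping {len(kept)}, deleting {len(expired)}")
```

Returning the expired entries instead of deleting them in place lets the caller record how many were removed before the actual deletion runs against the log store.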

The Trade-Offs of Self-Hosting

Some agencies choose to self-host document processing to maintain full control over client data. That’s a valid choice, and there are scenarios where it’s the right one:

- Extremely sensitive data (medical records, classified documents) where even transient API processing is outside the client’s risk tolerance

- Air-gapped environments where outbound HTTP calls are not permitted

- Regulatory requirements that mandate on-premise processing for specific data categories

But self-hosting comes with its own security obligations:

- You own the patching cycle for every processing library (ImageMagick CVEs, Tesseract updates, PDF parser vulnerabilities)

- You manage the isolation between processing jobs (one malicious file shouldn’t be able to access another job’s data)

- You handle memory management, resource limits, and OOM prevention

- You build and maintain the audit trail infrastructure

- You ensure encryption at rest for any temporary files created during processing

For most agency workloads, the security trade-off favors an API with strong data handling practices over self-hosted infrastructure with a growing maintenance burden. The API provider specializes in secure processing. Your agency specializes in building products for clients. Each plays to its strengths.

Getting Started

If you’re evaluating processing APIs for an agency project, start with the security documentation:

- Security page — covers infrastructure, data handling, encryption, and monitoring

- Data Processing Agreement — the full DPA terms

Both are publicly available without requiring a sales call or NDA. Read them before your client’s legal team asks — it’s easier to answer the security questionnaire when you’ve already reviewed the documentation.

From there, start the 7-day trial, test the APIs with non-sensitive sample data, and evaluate whether the data handling meets your client’s requirements. The SDKs are available for TypeScript, Python, and Go — same APIs, same security model, same zero-retention guarantee regardless of which language you use.

The security questionnaire doesn’t have to be the bottleneck. With the right vendor choices and a clear data flow map, it becomes a documentation exercise instead of a scramble.