The Compliance Liability Hiding in Your Document Pipeline

Your client’s invoice pipeline processes supplier PDFs through an extraction API, transforms product images for their webshop, and generates monthly reports as downloadable documents. The data flowing through that pipeline includes names, addresses, tax IDs, bank account numbers, and purchase histories of EU residents.

Now ask: where is that data being processed?

If the answer is “somewhere in AWS us-east-1” or “whatever region Cloudinary defaults to,” you have a compliance problem. Not a theoretical one. A practical one that EU data protection authorities are increasingly willing to enforce with fines.

For agencies building document processing workflows for EU clients, this is not a peripheral concern. It is the foundation on which every architecture decision rests. Get it wrong, and the liability sits with both the agency (as processor) and the client (as controller). Get it right, and GDPR compliance becomes a competitive advantage — something you can pitch to clients rather than something you scramble to explain away during a compliance review.

That is not only a legal argument. Stanford’s 2026 Enterprise AI Playbook found that security requirements did not simply kill projects in the successful deployments it studied. When handled well, those requirements later enabled sensitive-data workflows that would otherwise have been off-limits. The practical version for EU agencies is covered in “Security enables sensitive AI workflows.”

This guide covers the legal landscape, architectural patterns, vendor evaluation criteria, and practical decision frameworks for building document processing pipelines that are genuinely GDPR-compliant — not just GDPR-adjacent. The same review should cover every processor in the workflow: document extraction, document-to-markdown conversion, website extraction, image transformation, document generation, and spreadsheet generation.

The Legal Landscape: Why US-Hosted Processing Is a Risk

Schrems II and the Death of Automatic Adequacy

In July 2020, the Court of Justice of the European Union invalidated the EU-US Privacy Shield in what is known as the Schrems II decision (Case C-311/18). The court found that US surveillance laws — specifically Section 702 of the Foreign Intelligence Surveillance Act (FISA) and Executive Order 12333 — do not provide protections equivalent to EU fundamental rights.

The immediate consequence: Standard Contractual Clauses (SCCs) alone no longer settle the question for transfers to the US. The court upheld SCCs as a transfer mechanism, but held that they are not sufficient on their own when the law of the destination country undermines them. Organizations must conduct a Transfer Impact Assessment (TIA) for each transfer and implement supplementary measures where US law conflicts with EU data protection standards.

The EU-US Data Privacy Framework: Not a Free Pass

The EU-US Data Privacy Framework (DPF), adopted in July 2023, partially addresses this gap. US companies that self-certify under the DPF can receive EU personal data without additional safeguards. But there are caveats that matter for document processing:

- Self-certification is voluntary. Not all US cloud providers participate, and participation can be withdrawn.

- The DPF is fragile. Max Schrems, the activist whose cases brought down Safe Harbor and Privacy Shield, has already signaled a challenge. Many legal experts expect the DPF to face judicial review. Building critical infrastructure on a legal mechanism that may be invalidated is a strategic risk.

- The CLOUD Act overrides geography. The US Clarifying Lawful Overseas Use of Data (CLOUD) Act of 2018 lets US authorities compel providers subject to US jurisdiction to produce data in their possession, custody, or control, wherever in the world it is stored, when served with valid legal process such as a warrant. A US cloud provider running servers in Frankfurt is still subject to CLOUD Act disclosure obligations.

What This Means for Document Processing

Document processing pipelines handle exactly the kind of data that triggers GDPR obligations: invoices contain personal names and financial data. Contracts contain signatures and identification numbers. HR documents contain employment history and compensation details.

When you send a client’s invoice PDF to an extraction API operated by a US company, you expose that personal data to US jurisdiction, even if the API server sits in an EU region: because the provider is US-incorporated, the CLOUD Act applies.

This creates a layered risk:

- Transfer risk. The data crosses jurisdictional boundaries, even if it only crosses them logically (US company, EU server) rather than physically.

- Access risk. US law enforcement can compel the provider to produce the data, and gag orders can prevent the provider from notifying you.

- Retention risk. Many document processing APIs cache files for performance, store intermediate results, or retain logs that include document content. Even “temporary” storage creates a window of exposure.

For an agency building pipelines for EU clients, each of these risks is a liability that flows downstream to your client — and upstream to you as the entity that chose the vendor.

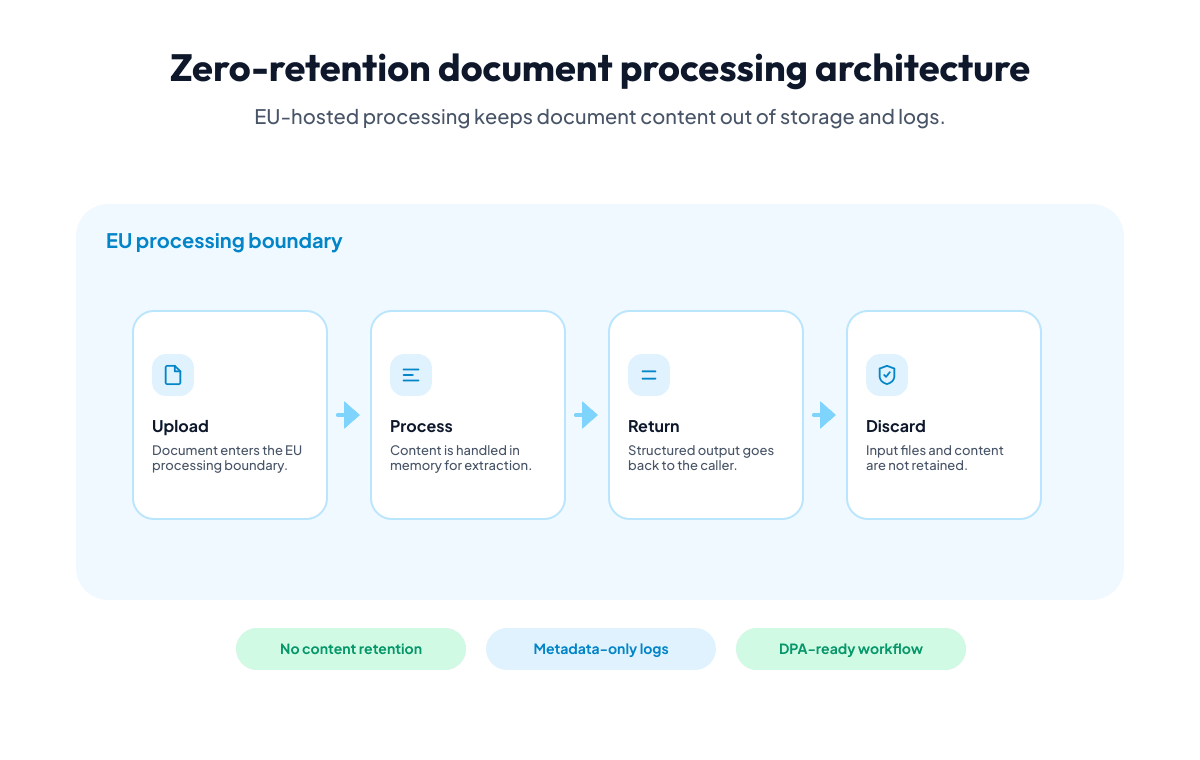

Zero-Retention Processing Architecture

The cleanest way to minimize GDPR exposure in document processing is to ensure that personal data never persists at the processing layer. This is the zero-retention pattern.

How Zero-Retention Works

In a zero-retention architecture, the document processing service:

- Receives the file (via upload or URL reference)

- Processes it entirely in memory

- Returns the result (extracted data, transformed image, generated document)

- Discards the input file and all intermediate data immediately after the response is sent

No file is written to disk. No result is cached. No intermediate state is stored. The processing service is stateless with respect to document content.
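The request lifecycle above can be sketched in a few lines. This is a minimal illustration, not any vendor's implementation: `process_in_memory` and the `extract` callback are hypothetical names, and a real service would also have to account for copies held by the web framework, the runtime, and the operating system.

```python
from typing import Callable


def process_in_memory(payload: bytes, extract: Callable[[memoryview], dict]) -> dict:
    """Run `extract` on an in-memory working copy, then overwrite that copy."""
    buffer = bytearray(payload)   # mutable working copy; nothing is written to disk
    view = memoryview(buffer)     # zero-copy window handed to the extractor
    try:
        return extract(view)
    finally:
        # Overwrite the working copy once a result (or an error) has been produced.
        for index in range(len(buffer)):
            buffer[index] = 0
```

The `finally` block runs whether extraction succeeds or fails, so a failed request does not leave document content behind in the working buffer.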

Why This Matters for GDPR

Under GDPR Article 5(1)(e), personal data must be “kept in a form which permits identification of data subjects for no longer than is necessary for the purposes for which the personal data are processed” (storage limitation). Zero-retention is the strongest possible implementation of this principle — the data exists only for the duration of the API call.

This dramatically simplifies your Data Protection Impact Assessment (DPIA). The processing service handles personal data, but it does not store it. The window of exposure is measured in seconds, not hours or days.

Contrast this with services that cache uploaded files for 24 hours “for debugging purposes” or retain extracted results “to improve model accuracy.” Each retention window is a period during which the data could be accessed, breached, or compelled by law enforcement. Each one requires documentation, justification, and security measures in your DPIA.

Operational Logging Without Content Retention

Zero-retention does not mean zero observability. A well-designed processing service logs operational metadata — request timestamps, response times, error codes, credit consumption — without logging document content.

The distinction matters: operational logs help you debug failures and monitor performance. Content logs create GDPR liabilities. Your vendor’s logging architecture should separate these concerns by design, not by policy.

Look for vendors where:

- Operational logs auto-delete after a defined retention period (90 days is common and defensible)

- Log entries never contain document content, extracted field values, or image data

- The retention period is documented in the vendor’s privacy policy, not just mentioned in a sales call
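One way to separate the two concerns "by design" is an allowlist: only named metadata fields can ever reach the log, so document content is dropped even if a caller passes it by mistake. The sketch below assumes a dictionary-shaped request event; the field names and the helper names are illustrative, and the 90-day period is the example figure from the list above.

```python
from datetime import datetime, timedelta

# Assumed allowlist of operational metadata; any other key is dropped.
ALLOWED_LOG_FIELDS = {"request_id", "timestamp", "duration_ms", "status_code", "credits_used"}
LOG_RETENTION = timedelta(days=90)


def to_log_entry(event: dict) -> dict:
    """Keep only allowlisted operational metadata from a request event."""
    return {key: value for key, value in event.items() if key in ALLOWED_LOG_FIELDS}


def is_expired(entry: dict, now: datetime) -> bool:
    """True when a log entry has outlived the retention period and should be purged."""
    return now - entry["timestamp"] > LOG_RETENTION
```

An allowlist fails closed: a newly added field stays out of the logs until someone deliberately adds it, which is the opposite of a denylist that has to anticipate every sensitive key.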

DPA Requirements for Document Processing Vendors

A Data Processing Agreement (DPA) is required under GDPR Article 28 whenever a data controller (your client) engages a data processor (you, as the agency, or the API vendor) to process personal data on their behalf.

The DPA Chain

In a typical agency setup, the DPA chain looks like this:

    Client (Controller)
    └── Agency (Processor): DPA with client
        └── API Vendor (Sub-processor): DPA with agency

Your client needs a DPA with your agency. Your agency needs a DPA with each API vendor that processes personal data from client documents. The client should be informed about sub-processors in the DPA, and many enterprise clients require the right to object to new sub-processors.

What to Look for in a Vendor DPA

Not all DPAs are created equal. Many are boilerplate documents designed to satisfy a checkbox rather than provide genuine compliance assurance. When evaluating a document processing vendor’s DPA, check for:

Processing location specificity. The DPA should state exactly where data is processed — not “EU/EEA” but the specific country and, ideally, the specific data center provider. “Germany (Hetzner)” is more useful than “European Economic Area.”

Sub-processor transparency. The DPA should list all sub-processors that handle personal data, their locations, and what data they access. A document processing service that routes files through a US-based OCR provider has introduced a transatlantic transfer, even if the primary API is EU-hosted.

Data deletion commitments. The DPA should specify what happens to data after processing. Zero-retention should be explicitly stated, not implied. Look for language like “input files are processed in memory and deleted immediately upon completion of the request.”

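Breach notification timelines. GDPR Article 33(1) gives the controller 72 hours from becoming aware of a breach to notify the supervisory authority, and Article 33(2) requires a processor to notify the controller without undue delay. The DPA should commit the vendor to notifying you without undue delay, ideally with a specific hour target, so that you can inform your client in time for the client to meet its 72-hour deadline.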

Audit rights. Article 28(3)(h) requires the processor to make available all information necessary to demonstrate compliance. A good DPA includes provisions for audits or third-party certifications.

Technical and organizational measures (TOMs). The DPA should reference specific security measures — encryption in transit, encryption at rest (if applicable), access controls, employee training — not just “appropriate technical and organizational measures.”

Practical Tip: Build a DPA Evaluation Checklist

Before evaluating any vendor, build a checklist based on Article 28(3) requirements. Score each vendor against it. The checklist should cover:

- Processing purpose and scope

- Data types processed

- Processing location (specific country and provider)

- Sub-processor list and locations

- Data retention / deletion policy

- Breach notification timeline

- Audit rights and procedures

- Technical security measures

- Data subject rights assistance

- Return/deletion obligations at contract end

This checklist becomes a reusable asset across client engagements. When a client asks “how did you evaluate this vendor?”, you hand them the scored checklist instead of scrambling to reconstruct your reasoning.
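A scored checklist can be as simple as the sketch below. The item names are taken from the list above; the pass/fail scoring and the `score_vendor` helper are assumptions for illustration, and a real evaluation would likely attach evidence (a DPA clause number, a link to the sub-processor page) to each answer.

```python
CHECKLIST = [
    "Processing purpose and scope",
    "Data types processed",
    "Processing location (specific country and provider)",
    "Sub-processor list and locations",
    "Data retention / deletion policy",
    "Breach notification timeline",
    "Audit rights and procedures",
    "Technical security measures",
    "Data subject rights assistance",
    "Return/deletion obligations at contract end",
]


def score_vendor(answers: dict[str, bool]) -> tuple[int, list[str]]:
    """Return the number of satisfied items and the list of gaps, in checklist order."""
    gaps = [item for item in CHECKLIST if not answers.get(item, False)]
    return len(CHECKLIST) - len(gaps), gaps
```

An unanswered item counts as a gap, so an incomplete review cannot produce a clean score by omission.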

Architecture Patterns for Compliant Document Processing

Pattern 1: Direct EU-Hosted API

The simplest pattern. Your application sends documents directly to an EU-hosted processing API and receives results back.

    Client Application (EU)
      → EU-Hosted Processing API (EU)
      → Response back to application

When to use: Most document processing workflows. Invoice extraction, image transformation, report generation, document conversion.

GDPR implications: Minimal. Data stays within the EU throughout the request lifecycle. If the vendor operates zero-retention, no personal data persists outside your application.

Here is what this looks like with Iteration Layer — the document is sent to an EU-hosted API, processed in memory, and the structured result is returned immediately:

cURL:

    curl -X POST https://api.iterationlayer.com/document-extraction/v1/extract \
      -H "Authorization: Bearer il_your_api_key" \
      -H "Content-Type: application/json" \
      -d '{
        "files": [
          {
            "type": "url",
            "name": "invoice.pdf",
            "url": "https://your-eu-storage.example.com/invoices/INV-2026-001.pdf"
          }
        ],
        "schema": {
          "fields": [
            {
              "name": "vendor_name",
              "description": "Name of the invoicing company",
              "type": "TEXT"
            },
            {
              "name": "invoice_total",
              "description": "Total amount including VAT",
              "type": "CURRENCY_AMOUNT"
            },
            {
              "name": "iban",
              "description": "Bank account IBAN for payment",
              "type": "IBAN"
            }
          ]
        }
      }'

JavaScript / TypeScript:

    const client = new IterationLayer({ apiKey: "il_your_api_key" });

    const result = await client.extractDocument({
      files: [
        {
          type: "url",
          name: "invoice.pdf",
          url: "https://your-eu-storage.example.com/invoices/INV-2026-001.pdf",
        },
      ],
      schema: {
        fields: [
          {
            name: "vendor_name",
            description: "Name of the invoicing company",
            type: "TEXT",
          },
          {
            name: "invoice_total",
            description: "Total amount including VAT",
            type: "CURRENCY_AMOUNT",
          },
          {
            name: "iban",
            description: "Bank account IBAN for payment",
            type: "IBAN",
          },
        ],
      },
    });

Python:

    client = IterationLayer(api_key="il_your_api_key")

    result = client.extract_document(
        files=[
            {
                "type": "url",
                "name": "invoice.pdf",
                "url": "https://your-eu-storage.example.com/invoices/INV-2026-001.pdf",
            }
        ],
        schema={
            "fields": [
                {
                    "name": "vendor_name",
                    "description": "Name of the invoicing company",
                    "type": "TEXT",
                },
                {
                    "name": "invoice_total",
                    "description": "Total amount including VAT",
                    "type": "CURRENCY_AMOUNT",
                },
                {
                    "name": "iban",
                    "description": "Bank account IBAN for payment",
                    "type": "IBAN",
                },
            ]
        },
    )

Go:

    client := iterationlayer.NewClient("il_your_api_key")

    result, err := client.ExtractDocument(iterationlayer.ExtractDocumentRequest{
        Files: []iterationlayer.FileInput{
            iterationlayer.NewFileFromURL("invoice.pdf",
                "https://your-eu-storage.example.com/invoices/INV-2026-001.pdf"),
        },
        Schema: iterationlayer.ExtractionSchema{
            Fields: []iterationlayer.FieldConfig{
                iterationlayer.TextFieldConfig{
                    Name:        "vendor_name",
                    Description: "Name of the invoicing company",
                },
                iterationlayer.CurrencyAmountFieldConfig{
                    Name:        "invoice_total",
                    Description: "Total amount including VAT",
                },
                iterationlayer.IBANFieldConfig{
                    Name:        "iban",
                    Description: "Bank account IBAN for payment",
                },
            },
        },
    })

The invoice never leaves the EU. The extracted data (vendor name, total, IBAN) returns to your application. No intermediate storage, no cross-border transfer.

Pattern 2: Webhook-Based Async Processing

For large documents or batch processing, the synchronous pattern may time out. The async pattern uses webhooks: you submit the document, receive an acknowledgment, and get the result delivered to your webhook endpoint when processing completes.

    Client Application (EU)
      → EU-Hosted Processing API (EU) [accepts, starts processing]
      → Immediate acknowledgment to application
      ...
      → EU-Hosted Processing API (EU) → Webhook callback to application (EU)

GDPR consideration: The webhook URL must be an EU-hosted endpoint. If your webhook handler runs on a US cloud provider, you have reintroduced a transatlantic transfer at the result delivery step. Ensure your entire callback chain stays within the EU.
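A simple guard is to refuse any webhook URL whose host is not on a list of endpoints you have already confirmed run on EU infrastructure. The sketch below assumes you maintain that list yourself from your infrastructure inventory; the host names and the `is_allowed_webhook` helper are hypothetical. A hostname by itself proves nothing about where a server runs, so the check is only as good as the list behind it.

```python
from urllib.parse import urlsplit


def is_allowed_webhook(url: str, allowed_hosts: set[str]) -> bool:
    """Accept only HTTPS webhook URLs whose host is on the reviewed allowlist."""
    parts = urlsplit(url)
    return parts.scheme == "https" and parts.hostname in allowed_hosts
```

Running this check where webhook URLs are configured, rather than when a callback fires, keeps a misrouted endpoint from ever receiving extracted data.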

Pattern 3: Pre-Signed URL with EU Storage

When documents are already in EU-hosted cloud storage, you can pass a pre-signed URL directly to the processing API instead of uploading the file. This avoids an extra copy of the document in transit.

    EU Object Storage (source)
      → Pre-signed URL → EU-Hosted Processing API (EU)
      → Processing API fetches file from EU storage
      → Result returned to application

GDPR advantage: The document never transits through your application server. It goes directly from EU storage to EU processing. This reduces the number of systems that handle the personal data, which simplifies your Article 30 records of processing.
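To make the idea concrete: a pre-signed URL carries its own proof of authorization, typically an expiry time plus a signature over the resource path. The sketch below is a self-contained illustration of that concept using HMAC. It is not the signing scheme of any real object store, and in practice you would generate the URL with your storage provider's SDK. The function names are hypothetical, and the base URL is assumed to have no query string of its own.

```python
import hashlib
import hmac
from urllib.parse import parse_qs, urlencode, urlsplit


def sign_url(base_url: str, secret: bytes, expires_at: int) -> str:
    """Append an expiry timestamp and an HMAC-SHA256 signature to `base_url`."""
    message = f"{base_url}?expires={expires_at}".encode()
    signature = hmac.new(secret, message, hashlib.sha256).hexdigest()
    return f"{base_url}?{urlencode({'expires': expires_at, 'signature': signature})}"


def verify_url(url: str, secret: bytes, now: int) -> bool:
    """Accept the URL only if the signature matches and it has not expired."""
    parts = urlsplit(url)
    query = parse_qs(parts.query)
    try:
        expires_at = int(query["expires"][0])
        signature = query["signature"][0]
    except (KeyError, ValueError):
        return False
    base_url = f"{parts.scheme}://{parts.netloc}{parts.path}"
    message = f"{base_url}?expires={expires_at}".encode()
    expected = hmac.new(secret, message, hashlib.sha256).hexdigest()
    return hmac.compare_digest(signature, expected) and now < expires_at
```

A short expiry matters for the same reason zero retention does: the URL grants access to the document, so the window in which it works should be no longer than the processing call needs.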

Pattern 4: On-Premises Pre-Processing with EU API Post-Processing

Some clients have strict requirements about certain document types never leaving their internal network. In these cases, you can split the pipeline: handle sensitive pre-processing on-premises and send only de-identified or aggregated data to the cloud API for further processing.

    On-Premises System
      → Extract sensitive fields locally
      → Send non-sensitive data to EU-Hosted API for report generation

When to use: Government clients, healthcare, financial services with strict data classification policies. The tradeoff is increased on-premises complexity.
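The split itself can be sketched as a field-level partition. The field names below are an assumed classification for an invoice record, and `split_record` is a hypothetical helper; which fields count as sensitive is a decision for the client's data classification policy. Removing direct identifiers does not by itself make the remainder anonymous, so the outbound part may still need to be treated as personal data.

```python
# Assumed classification; the real list comes from the client's data policy.
SENSITIVE_FIELDS = frozenset({"name", "address", "tax_id", "iban"})


def split_record(
    record: dict, sensitive_fields: frozenset[str] = SENSITIVE_FIELDS
) -> tuple[dict, dict]:
    """Partition a record into (stays on-premises, may be sent to the cloud API)."""
    local = {key: value for key, value in record.items() if key in sensitive_fields}
    outbound = {key: value for key, value in record.items() if key not in sensitive_fields}
    return local, outbound
```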

Decision Framework: EU-Hosted vs US-Hosted Services

Not every document processing task involves personal data. A pipeline that resizes product stock photos has different compliance requirements than one that extracts patient names from medical records. Here is a practical decision framework.

Step 1: Data Classification

Before choosing a vendor, classify the data flowing through the pipeline:

| Category | Examples | GDPR Applicability |
|---|---|---|
| No personal data | Stock images, public domain documents, synthetic test data | Not subject to GDPR transfer restrictions |
| Pseudonymized data | Documents with names replaced by IDs (but IDs can be re-linked) | Still personal data under GDPR |
| Personal data | Invoices, contracts, HR documents, customer correspondence | Full GDPR transfer restrictions apply |
| Special category data | Health records, biometric data, political opinions | Stricter protections under Article 9 |

If the pipeline handles pseudonymized, personal, or special category data, EU-hosted processing is the lowest-risk option.
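In code, the classification step amounts to finding the most restrictive category present in a pipeline and letting that drive the hosting decision. The enum and helper names below are illustrative assumptions; the four categories and their ordering follow the table.

```python
from enum import Enum
from typing import Iterable


class DataCategory(Enum):
    NO_PERSONAL_DATA = "no personal data"
    PSEUDONYMIZED = "pseudonymized data"
    PERSONAL = "personal data"
    SPECIAL_CATEGORY = "special category data"


# Ordered from least to most restrictive, following the table rows.
STRICTNESS = [
    DataCategory.NO_PERSONAL_DATA,
    DataCategory.PSEUDONYMIZED,
    DataCategory.PERSONAL,
    DataCategory.SPECIAL_CATEGORY,
]


def strictest_category(categories: Iterable[DataCategory]) -> DataCategory:
    """Return the most restrictive category found; raises ValueError if none are given."""
    return max(categories, key=STRICTNESS.index)


def prefers_eu_hosting(category: DataCategory) -> bool:
    """Pseudonymized data is still personal data, so only the first row is exempt."""
    return category is not DataCategory.NO_PERSONAL_DATA
```

An empty pipeline raises instead of defaulting to "no personal data", so an unclassified pipeline cannot slip through as exempt.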

Step 2: Transfer Impact Assessment

If you are considering a US-hosted service for personal data processing, you must conduct a Transfer Impact Assessment (TIA). The TIA evaluates:

- Legal framework of the destination country. For the US: FISA Section 702, CLOUD Act, Executive Order 12333.

- Supplementary measures. Technical measures (encryption where only you hold the key), contractual measures (SCCs with additional clauses), organizational measures (limiting data access).

- Practical effectiveness. Can the supplementary measures actually prevent access by US authorities? If the vendor must provide data in cleartext to process it (which is the case for document extraction), encryption alone is not a sufficient supplementary measure.

For document processing specifically, supplementary measures are hard to implement effectively. The vendor needs to read the document content to extract data from it. You cannot encrypt the content in a way that allows processing but prevents government access — the vendor must have access to the cleartext during processing.

This is why zero-retention EU-hosted processing is structurally superior for GDPR compliance: the data is processed in the EU, by an EU-incorporated entity, with no retention. There is no data to compel, no transfer to assess, and no supplementary measures to evaluate.

Step 3: Vendor Evaluation Matrix

| Criterion | EU-Hosted, EU Company | EU-Hosted, US Company | US-Hosted, US Company |
|---|---|---|---|
| CLOUD Act exposure | None | Yes (company is US-incorporated) | Yes |
| Transfer mechanism needed | None | DPF certification or SCCs + TIA | DPF certification or SCCs + TIA |
| Schrems III risk | None | High (DPF may be invalidated) | High |
| DPA complexity | Standard Article 28 DPA | Standard DPA + CLOUD Act provisions | Standard DPA + transfer clauses |
| Client confidence | High | Medium (requires explanation) | Low (requires extensive justification) |
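The first three rows of the matrix can be encoded as a lookup, which is handy when you screen a list of vendors. This is a sketch under the matrix's own assumptions, not legal advice: the key and field names are illustrative, and combinations the matrix does not cover (for example an EU company hosting in the US) are rejected instead of guessed.

```python
TRANSFER_TOOLS = "DPF certification or SCCs + TIA"

# Keys are (hosting region, place of incorporation); values mirror the matrix rows.
MATRIX = {
    ("EU", "EU"): {"cloud_act_exposure": False, "transfer_mechanism": None, "schrems_iii_risk": "none"},
    ("EU", "US"): {"cloud_act_exposure": True, "transfer_mechanism": TRANSFER_TOOLS, "schrems_iii_risk": "high"},
    ("US", "US"): {"cloud_act_exposure": True, "transfer_mechanism": TRANSFER_TOOLS, "schrems_iii_risk": "high"},
}


def assess_vendor(hosted_in: str, incorporated_in: str) -> dict:
    """Look up the matrix column for a vendor; unknown combinations raise ValueError."""
    try:
        return MATRIX[(hosted_in, incorporated_in)]
    except KeyError:
        raise ValueError(
            f"combination not covered by the matrix: {hosted_in}-hosted, {incorporated_in} company"
        ) from None
```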

Step 4: The “Explain It to the DPO” Test

Before committing to a vendor, imagine explaining the data flow to your client’s Data Protection Officer. Can you describe, in plain language:

- Where the data goes?

- Who can access it?

- How long it persists?

- What happens if law enforcement requests it?

If the answer to any of these requires caveats, exceptions, or “in theory” qualifications, consider whether a simpler architecture would serve you better.

For EU-hosted, zero-retention processing by an EU company, the answers are straightforward: the data stays in the EU, only the processing service accesses it during the API call, it persists for zero seconds after the response, and EU law enforcement procedures apply (which include judicial oversight and GDPR protections).

Building GDPR Compliance into Your Agency Workflow

For New Client Engagements

- Classify data early. In the project scoping phase, identify what personal data will flow through the document pipeline. This determines vendor requirements.

- Include vendor compliance in your proposal. When you tell a client “we use EU-hosted processing with zero data retention,” that is a selling point. Put it in the proposal, not just the DPA.

- Set up the DPA chain before processing starts. Sign the DPA with your API vendor, then include the vendor as a sub-processor in your DPA with the client.

For Existing Pipelines

- Audit current data flows. Map every external service that touches document content. Check where each one processes data and what their retention policies are.

- Identify US-hosted services handling personal data. These are your highest-priority migration targets.

- Migrate incrementally. You do not need to replace everything at once. Start with the pipelines that handle the most sensitive data.
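The three audit steps map onto a small inventory: list every external service, filter for US-hosted services that handle personal data, and order the result by sensitivity. The sketch below uses hypothetical service names and an assumed 1 to 3 sensitivity scale; neither comes from a real audit.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class ServiceFlow:
    name: str
    hosting_region: str          # e.g. "EU" or "US"
    handles_personal_data: bool
    sensitivity: int             # assumed scale: 1 (low) to 3 (high)


def migration_targets(flows: list[ServiceFlow]) -> list[ServiceFlow]:
    """US-hosted services that handle personal data, most sensitive first."""
    targets = [
        flow for flow in flows
        if flow.handles_personal_data and flow.hosting_region == "US"
    ]
    return sorted(targets, key=lambda flow: flow.sensitivity, reverse=True)
```

The sorted list doubles as the incremental migration plan: work from the top and stop wherever the remaining risk is acceptable for now.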

For Client Compliance Reviews

Agencies working with EU mid-market clients will increasingly face compliance reviews. Having clear answers to these questions makes the review faster and builds trust:

- “Where are documents processed?” — Within the EU, with specific location documented.

- “Is there any transatlantic data transfer?” — No. Processing, storage, and logging all happen within the EU.

- “What is the data retention period?” — Zero. Files are processed in memory and discarded immediately.

- “Do you have a DPA?” — Yes, signed and available for review.

- “Who are the sub-processors?” — Listed in the DPA with locations and processing purposes.

Iteration Layer is built on this architecture. All processing runs on EU infrastructure with zero data retention. A DPA is available to all customers. See our Security page for full infrastructure details.

The Competitive Advantage of EU Compliance

For agencies serving EU clients, GDPR compliance is not just a cost of doing business. It is a differentiator.

When a competing agency tells a prospect “we use AWS Textract for document extraction,” that prospect’s DPO will ask about transatlantic data transfers, CLOUD Act exposure, and supplementary measures. The competing agency will spend hours preparing a Transfer Impact Assessment that may or may not satisfy the DPO.

When you tell the same prospect “we use EU-hosted processing with zero data retention — here is the DPA and the security documentation,” the conversation shifts from risk mitigation to capability discussion. You are not defending your architecture choices. You are demonstrating that compliance was baked in from the start.

This advantage compounds across client engagements. The DPA chain, the vendor evaluation, the compliance documentation — these are reusable assets. The first client engagement requires the most work. Every subsequent engagement reuses the same infrastructure, the same DPA, and the same compliance narrative.

For agencies positioning EU data sovereignty as part of their value proposition, the infrastructure backing that claim needs to be credible. An EU-hosted processing platform with zero retention, transparent sub-processors, and a proper DPA is the foundation.

Further Reading

- CJEU Schrems II Decision (Case C-311/18) — The full court ruling that invalidated Privacy Shield

- European Data Protection Board: Recommendations on supplementary measures

- US CLOUD Act full text

- EU-US Data Privacy Framework adequacy decision

- Iteration Layer Security page — Infrastructure details, encryption, data handling practices

- Iteration Layer Data Processing Agreement — Available for all customers