Two Regulations, One Pipeline

If you process documents with AI in the EU (extracting invoice data, parsing contracts, classifying incoming mail), you’re operating under two regulatory frameworks simultaneously. The GDPR has applied since May 2018 and governs how you handle personal data. The EU AI Act, which entered into force in August 2024 and applies in stages from February 2025, governs how you deploy AI systems.

Most developers know GDPR exists. Fewer have thought about how the AI Act applies to their document processing pipeline. And almost nobody has a clear picture of what both regulations mean together for a specific technical decision: which API do you call, where does the data go, and how long does it stay there. That question applies to every processor in the chain: Document Extraction, Document to Markdown, Website Extraction, image APIs, document generation, and spreadsheet generation.

This isn’t a legal guide. It’s a practical overview for technical decision-makers who need to understand the regulatory landscape well enough to make informed architecture choices.

GDPR and Document Processing

GDPR applies whenever you process personal data of people in the EU, and to any processing you carry out as an EU-established organization. Documents are full of personal data: names, addresses, IBANs, email addresses, salary figures, medical information. Every invoice, contract, or HR document containing such data that passes through your extraction pipeline is a GDPR-relevant processing operation.

Three GDPR principles matter most for document processing:

Data minimization. You should only process the data you actually need. If you’re extracting invoice totals and vendor names, your processing service shouldn’t retain the full document text, the embedded images, or any other data beyond what’s necessary for the extraction. A service that stores your documents “for quality improvement” or “model training” is processing more data than your stated purpose requires.

Storage limitation. Personal data shouldn’t be kept longer than necessary for the purpose it was collected. If you send a document to an API for extraction, and that API retains the document on its servers after returning the result, you have a storage limitation problem. You now need to account for that retention in your privacy policy, your data processing records, and your DPA with the vendor.

Transfer restrictions. Transferring personal data outside the EU requires a legal basis — an adequacy decision, Standard Contractual Clauses, or Binding Corporate Rules. If your extraction API is hosted in the US, every document you send is a transatlantic transfer. That transfer needs a legal mechanism, and the legal landscape for EU-US transfers has been unstable (Safe Harbor invalidated in 2015, Privacy Shield invalidated in 2020, the current EU-US Data Privacy Framework in place since 2023).

The practical takeaway: a document processing service that runs in the EU, processes in memory, retains nothing, and returns only the extracted fields gives you the cleanest GDPR posture. No retention to account for, no transfers to justify, no excess data to minimize.
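On your own side of the pipeline, data minimization can be enforced with an allow-list applied before anything is stored. The sketch below is illustrative only: the field names and the shape of the extraction result are assumptions, not the response format of any particular API.

```python
from typing import Any

# Hypothetical allow-list: the only fields this pipeline's stated purpose needs.
ALLOWED_FIELDS = frozenset({"invoice_number", "total", "vendor_name"})


def minimize(extraction: dict[str, Any],
             allowed: frozenset[str] = ALLOWED_FIELDS) -> dict[str, Any]:
    """Return only the allow-listed fields; everything else is dropped."""
    return {key: value for key, value in extraction.items() if key in allowed}


# Assumed result shape: a flat mapping of field name to extracted value.
raw_result = {
    "invoice_number": "INV-1042",
    "total": "1250.00",
    "vendor_name": "Example GmbH",
    "full_text": "entire document text",
    "contact_email": "jane@example.com",
}

stored = minimize(raw_result)
print(sorted(stored))
```

Filtering at the storage boundary means a schema change upstream cannot silently widen what you retain; new fields stay out until someone adds them to the allow-list on purpose.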

The EU AI Act and Document Processing

The AI Act classifies AI systems by risk level. Most automated document processing falls into the “limited risk” or “minimal risk” categories, not “high risk” — but the classification depends on what you’re processing and what decisions the output feeds into.

When document processing is minimal risk. An AI system that extracts invoice numbers, totals, and dates from supplier invoices for bookkeeping is a minimal-risk system. It’s automating a clerical task. The AI Act imposes no system-specific obligations on minimal-risk systems; what remains are general duties such as AI literacy, plus voluntary codes of conduct.

When document processing is limited risk. If your extraction system interacts with people — for example, an AI chatbot that asks users to upload documents and then processes them — the AI Act requires transparency. The user needs to know they’re interacting with an AI system.

When document processing could be high risk. If extracted data feeds into decisions about people — credit assessments, employment decisions, insurance eligibility, access to essential services — the AI system may be classified as high risk. High-risk systems have obligations around risk management, data governance, technical documentation, human oversight, and accuracy.

The important nuance: the classification applies to the AI system as a whole, not to individual API calls. If you’re using an extraction API as one component in a larger system, the risk classification depends on the system’s purpose, not on the extraction step alone.

What this means in practice. For most document processing pipelines — invoice processing, contract parsing, catalog extraction, report generation — the AI Act obligations are minimal. But if you’re building a system where AI-extracted data influences decisions about individuals, you need to understand where your pipeline falls on the risk spectrum and what obligations apply.
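The three cases above can be turned into a rough first-pass triage for an inventory of pipelines. This is a sketch for deciding which pipelines need a closer look, not a legal classification: the domain labels and the `triage` function are hypothetical, and anything flagged as potentially high risk still needs an assessment of the system as a whole.

```python
from enum import Enum


class RiskTier(Enum):
    MINIMAL = "minimal"
    LIMITED = "limited"
    POTENTIALLY_HIGH = "potentially high"


# Decision domains named in the text as possible high-risk triggers.
# The string labels themselves are assumptions made for this sketch.
PERSON_DECISION_DOMAINS = frozenset(
    {"credit", "employment", "insurance", "essential_services"}
)


def triage(decision_domains: set[str], interacts_with_people: bool) -> RiskTier:
    """First-pass tier for a pipeline, based on what its output feeds into."""
    if decision_domains & PERSON_DECISION_DOMAINS:
        return RiskTier.POTENTIALLY_HIGH
    if interacts_with_people:
        return RiskTier.LIMITED
    return RiskTier.MINIMAL


print(triage(set(), interacts_with_people=False).value)
print(triage({"credit"}, interacts_with_people=False).value)
```

The check is made on the purposes the pipeline serves rather than on the extraction call, which mirrors the point that classification applies to the system as a whole.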

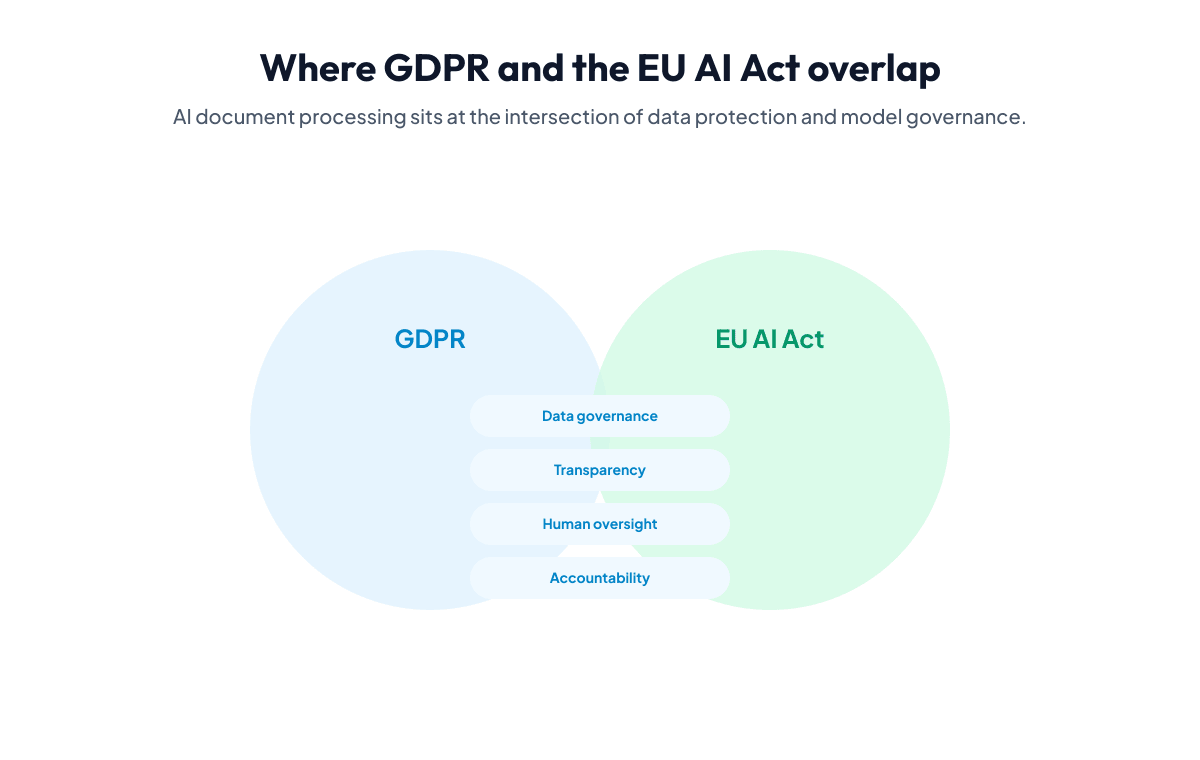

Where the Two Regulations Overlap

GDPR and the AI Act aren’t separate concerns — they interact. A few areas where they converge for document processing:

Transparency. GDPR requires you to inform data subjects about how their data is processed. The AI Act requires transparency about AI involvement. If you’re processing documents that contain personal data using AI extraction, both regulations point in the same direction: be clear about what you’re doing, what data you’re processing, and what role AI plays.

Data governance. The AI Act’s high-risk requirements include data governance obligations: training data should be relevant, sufficiently representative, and examined for possible bias. GDPR’s accuracy principle requires that personal data be accurate and kept up to date. If you’re training or fine-tuning extraction models on documents containing personal data, both frameworks apply.

Accountability. Both regulations emphasize documentation and accountability. GDPR requires records of processing activities. The AI Act requires technical documentation for high-risk systems. For document processing pipelines, this means maintaining clear records of what data flows through your system, which AI services process it, and where those services are hosted.
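A lightweight way to keep such records is to describe each processing step as structured data that lives next to the pipeline code. The sketch below is one possible shape, not a template required by either regulation; the service names, region strings, and the "starts with `eu`" convention are all assumptions for illustration.

```python
import json
from dataclasses import asdict, dataclass


@dataclass(frozen=True)
class ProcessingStep:
    service: str            # which AI service handles the data
    purpose: str            # why the data is processed
    hosting_region: str     # where the service runs
    data_categories: tuple  # kinds of personal data involved
    retention: str          # what the service keeps, and for how long


# Hypothetical pipeline inventory.
PIPELINE = [
    ProcessingStep("document-extraction", "invoice bookkeeping", "eu-central",
                   ("names", "IBANs"), "none (in-memory)"),
    ProcessingStep("archive-ocr", "legacy scan OCR", "us-east",
                   ("names", "addresses"), "30 days"),
]


def steps_outside_eu(steps: list) -> list:
    """Names of services whose region label does not start with 'eu'."""
    return [s.service for s in steps if not s.hosting_region.startswith("eu")]


def export_records(steps: list) -> str:
    """Serialize the inventory, e.g. for attachment to processing records."""
    return json.dumps([asdict(s) for s in steps], indent=2)


print(steps_outside_eu(PIPELINE))
```

Keeping the inventory in version control gives you a dated history of when a service, region, or retention period changed.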

What Zero-Retention EU-Hosted Processing Solves

An architecture decision can address many of these concerns at once. Iteration Layer processes all documents on EU infrastructure, in memory, with zero retention. Here’s what that means for each regulatory concern:

GDPR data minimization: The API receives your document, extracts the requested fields, and returns the result. The document is not stored, not cached, not logged. The only data that outlives the request is the structured extraction result returned to you: exactly the fields you asked for.

GDPR storage limitation: There is nothing to delete because nothing is stored. Your retention obligation is limited to whatever you do with the extraction result on your end. The processing service itself has no retention period to account for.

GDPR transfer restrictions: Data never leaves the EU. No transatlantic transfers, no SCCs, no adequacy decisions to evaluate. The processing happens entirely within the EU, and the result is returned to you directly.

AI Act transparency: The extraction API returns confidence scores for every field, so you can see how certain the system is about each extracted value. This supports human oversight — you can route low-confidence results to manual review rather than blindly trusting AI output.
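Routing on confidence can be as simple as splitting fields around a threshold. In the sketch below, the `Field` shape and the 0.85 threshold are assumptions made for illustration; the actual response format and a sensible cut-off depend on the API and on how costly a wrong value is in your workflow.

```python
from typing import NamedTuple


class Field(NamedTuple):
    name: str
    value: str
    confidence: float  # assumed to be in the range 0.0 to 1.0


REVIEW_THRESHOLD = 0.85  # hypothetical; tune per field and use case


def route(fields: list[Field], threshold: float = REVIEW_THRESHOLD):
    """Split fields into (accepted, needs_review) by confidence score."""
    accepted = [f for f in fields if f.confidence >= threshold]
    needs_review = [f for f in fields if f.confidence < threshold]
    return accepted, needs_review


extracted = [
    Field("invoice_number", "INV-1042", 0.99),
    Field("total", "1250.00", 0.62),
    Field("vendor_name", "Example GmbH", 0.91),
]

accepted, needs_review = route(extracted)
print([f.name for f in needs_review])
```

Anything in `needs_review` goes to a person before it reaches downstream systems, which gives you a concrete, documentable human-oversight step.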

AI Act documentation: The API’s behavior is deterministic for a given document and schema. Same input, same schema, same result. This makes documentation and auditability straightforward — you can reproduce and verify any extraction.
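If results are reproducible, an audit trail can record fingerprints rather than content. The sketch below hashes the document, the schema, and the result with SHA-256 so that a later re-run can be compared against the original; the function name and record layout are hypothetical, and whether a document hash is acceptable to store under your own retention rules is something to confirm for your situation.

```python
import hashlib
import json


def _canonical(obj) -> bytes:
    """Stable JSON encoding so key order does not change the hash."""
    return json.dumps(obj, sort_keys=True, separators=(",", ":")).encode("utf-8")


def fingerprint(document: bytes, schema: dict, result: dict) -> dict:
    """Audit record holding only SHA-256 digests, never the content itself."""
    return {
        "document_sha256": hashlib.sha256(document).hexdigest(),
        "schema_sha256": hashlib.sha256(_canonical(schema)).hexdigest(),
        "result_sha256": hashlib.sha256(_canonical(result)).hexdigest(),
    }


schema = {"fields": ["invoice_number", "total"]}
first_run = fingerprint(b"%PDF-1.7 example bytes", schema,
                        {"invoice_number": "INV-1042", "total": "1250.00"})
second_run = fingerprint(b"%PDF-1.7 example bytes", schema,
                         {"total": "1250.00", "invoice_number": "INV-1042"})

# Equal fingerprints mean the re-run reproduced the original extraction.
print(first_run == second_run)
```

Comparing fingerprints across runs turns the "same input, same schema, same result" property into something you can check mechanically during an audit.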

What This Doesn’t Solve

No single infrastructure decision makes you “compliant.” Compliance is about the entire system, not one component.

If you process documents containing personal data, you still need:

- A lawful basis for processing under GDPR (contract, legitimate interest, consent, etc.)

- Privacy notices that accurately describe your processing activities

- A record of processing activities that includes your document processing pipeline

- If you’re in high-risk territory under the AI Act, the full set of high-risk obligations (risk management, human oversight, accuracy monitoring)

EU-hosted, zero-retention processing simplifies the infrastructure layer of compliance. It doesn’t replace the legal and organizational layers. Work with legal counsel for your specific situation.

Practical Takeaways

For developers and technical decision-makers evaluating document processing services for EU use cases:

- Check where data is processed. “GDPR compliant” on a marketing page doesn’t mean the data stays in the EU. Check the actual infrastructure — which cloud provider, which region, which data center.

- Check the retention policy. Does the service store your documents after processing? For how long? For what purpose? “Processed and immediately deleted” is the cleanest answer. “Retained for 30 days for service improvement” means you need to account for that in your DPIA.

- Check the DPA. A real DPA specifies the processing location, the data categories, the retention period, and the subprocessors. If a vendor can’t provide one, or if the DPA is vague about processing locations, that’s a red flag.

- Assess your AI Act risk level. If your extraction output feeds into automated decisions about people, you may have high-risk obligations. If it feeds into bookkeeping or operational workflows, you’re likely in minimal-risk territory.

- Build for the strictest reasonable interpretation. Regulatory expectations and enforcement tend to tighten over time rather than loosen. An architecture that holds up under a strict reading today is less likely to need rebuilding when enforcement tightens.
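The vendor checks in this list can be captured as a small structured questionnaire, so that gaps show up as explicit open questions. This is a sketch under stated assumptions: the `VendorAnswers` fields, the region naming, and the wording of the questions are hypothetical and would need adapting to your own review process.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass
class VendorAnswers:
    processing_region: Optional[str]  # None if the vendor does not disclose it
    retention_days: Optional[int]     # 0 means deleted right after processing
    dpa_names_location: bool
    dpa_names_subprocessors: bool


def open_questions(v: VendorAnswers) -> list[str]:
    """Return the follow-ups a vendor's answers leave unresolved."""
    questions = []
    if v.processing_region is None:
        questions.append("Where is data processed (provider, region, data center)?")
    elif not v.processing_region.startswith("eu"):
        questions.append("Which transfer mechanism covers processing outside the EU?")
    if v.retention_days is None:
        questions.append("How long are documents retained, and for what purpose?")
    elif v.retention_days > 0:
        questions.append(f"Account for {v.retention_days}-day retention in the DPIA.")
    if not v.dpa_names_location:
        questions.append("DPA does not state the processing location.")
    if not v.dpa_names_subprocessors:
        questions.append("DPA does not list subprocessors.")
    return questions


vague_vendor = VendorAnswers(None, 30, False, True)
for question in open_questions(vague_vendor):
    print("-", question)
```

An empty list does not mean the vendor is compliant; it only means the infrastructure questions from this checklist have answers on file.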

Iteration Layer’s security page details the full infrastructure setup, and a Data Processing Agreement is available to all customers. The APIs are free to try — create an account and test the extraction pipeline with your own documents to verify the architecture before committing.