The 3am Alert

You shipped the image pipeline months ago. It worked in staging. It worked the first week in production. Then one morning at 3am, your phone buzzes. The container is dead. Memory usage spiked to 4 GB, Kubernetes killed the pod, and the queue backed up to 12,000 unprocessed images.

You SSH in, restart the service, and go back to bed. Two days later, it happens again. Different trigger this time — a user uploaded a 14,000 x 14,000 pixel TIFF and your resize step tried to hold the entire decoded bitmap in memory. That’s roughly 784 MB for a single image, before your pipeline even starts working on it.

This is the life cycle of every self-hosted image pipeline. It works until it doesn’t. And when it breaks, it breaks at the worst possible time, in ways you didn’t anticipate, because image processing is full of edge cases that only surface under real-world load with real-world files.

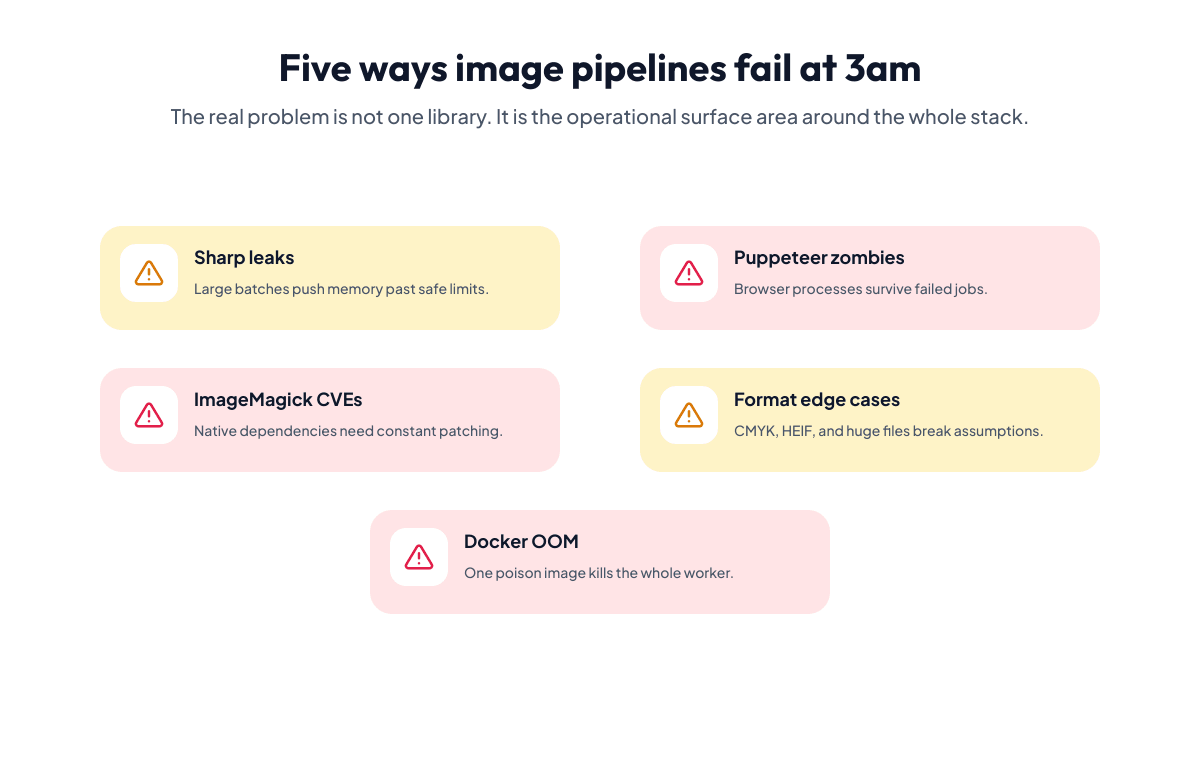

This post covers the five failure modes we’ve seen most often — in our own pipelines and in the ones our users migrated away from. For each one: the actual error, why it happens, and how an API-based approach eliminates the root cause entirely.

Failure 1: Sharp Memory Leaks

Sharp is the go-to image processing library for Node.js, and for good reason. It’s fast, well-documented, and built on libvips. But it has a well-known failure mode: memory leaks under sustained load.

The typical error looks like this:

FATAL ERROR: CALL_AND_RETRY_LAST Allocation failed - JavaScript heap out of memory

--- or in your monitoring ---

container memory: 3.8 GB / 4.0 GB limit

OOMKilled: true

The root cause is usually one of three things:

- Unreleased buffers. Sharp allocates native memory outside the V8 heap. If you’re processing images in a loop without proper cleanup, or holding references to intermediate buffers, the garbage collector never reclaims that memory. Node’s --max-old-space-size flag doesn’t help because the leak is in native memory, not the JS heap.

- Concurrent processing without backpressure. A queue dumps 200 images into your pipeline simultaneously. Each one allocates a decoded bitmap buffer. Even small images at 1,000 x 1,000 pixels consume ~4 MB decoded. Two hundred of them: 800 MB, just for the raw pixel data, before a single operation runs.

- Large input dimensions. A 10,000 x 10,000 pixel PNG decodes to ~400 MB. Your pipeline doesn’t check dimensions before processing, so one oversized upload from a user with a scanner blows past your container’s memory limit.
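The figures above follow from simple arithmetic. Here is a minimal sketch, assuming 4 bytes per pixel (8-bit RGBA); real decoders may use 3 bytes for opaque images or more for 16-bit channels, and the function name is purely illustrative.

```python
def decoded_size_bytes(width_px: int, height_px: int, bytes_per_pixel: int = 4) -> int:
    """Approximate size of the raw bitmap a decoder must hold in memory."""
    return width_px * height_px * bytes_per_pixel


MB = 1_000_000  # decimal megabytes, matching the rounded figures in the text

# One small image, and 200 of them decoded concurrently.
print(decoded_size_bytes(1_000, 1_000) / MB)        # 4.0
print(200 * decoded_size_bytes(1_000, 1_000) / MB)  # 800.0

# A single oversized scan.
print(decoded_size_bytes(10_000, 10_000) / MB)      # 400.0
```

The compressed file size tells you almost nothing here: a PNG of a few megabytes on disk can still expand to hundreds of megabytes once decoded.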

You can mitigate these with concurrency limits, explicit sharp.cache(false), dimension pre-checks, and careful buffer management. But that’s a lot of defensive code wrapped around what should be a straightforward resize-and-convert.
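A dimension pre-check doesn’t require decoding anything. As a rough sketch, the width and height of a PNG can be read straight from the first 24 bytes of the file. The 50-megapixel limit and the function names are assumptions, and only PNG is handled; in a Sharp pipeline, the same information would typically come from its metadata() call.

```python
import struct

PNG_SIGNATURE = b"\x89PNG\r\n\x1a\n"
MAX_PIXELS = 50_000_000  # assumed budget: about 200 MB decoded at 4 bytes per pixel


def png_dimensions(header: bytes) -> tuple[int, int]:
    """Read width and height from the first 24 bytes of a PNG file."""
    if len(header) < 24 or header[:8] != PNG_SIGNATURE or header[12:16] != b"IHDR":
        raise ValueError("not a PNG header")
    # IHDR stores width and height as big-endian unsigned 32-bit integers.
    width, height = struct.unpack(">II", header[16:24])
    return width, height


def is_within_budget(header: bytes, max_pixels: int = MAX_PIXELS) -> bool:
    """Decide from the header alone whether the image is safe to decode."""
    width, height = png_dimensions(header)
    return width * height <= max_pixels


# Build a synthetic header for a 10,000 x 10,000 PNG to exercise the check.
oversized = PNG_SIGNATURE + struct.pack(">I4sII", 13, b"IHDR", 10_000, 10_000)
print(png_dimensions(oversized))    # (10000, 10000)
print(is_within_budget(oversized))  # False
```

Rejecting the upload at this point costs 24 bytes of I/O instead of 400 MB of decoded pixels.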

The API alternative: offload the processing entirely. The image never touches your server’s memory. You send a URL or base64 payload, specify the operations, and get the result back.

curl -X POST https://api.iterationlayer.com/image-transformation/v1/transform \

-H "Authorization: Bearer $API_KEY" \

-H "Content-Type: application/json" \

-d '{

"file": {

"type": "url",

"name": "product.png",

"url": "https://example.com/large-product-photo.png"

},

"operations": [

{

"type": "resize",

"width_in_px": 800,

"height_in_px": 600,

"fit": "inside"

},

{

"type": "convert",

"format": "webp",

"quality": 85

}

]

}'

import { IterationLayer } from "iterationlayer";

const client = new IterationLayer({ apiKey: process.env.API_KEY });

const result = await client.transformImage({

file: {

type: "url",

name: "product.png",

url: "https://example.com/large-product-photo.png",

},

operations: [

{ type: "resize", width_in_px: 800, height_in_px: 600, fit: "inside" },

{ type: "convert", format: "webp", quality: 85 },

],

});

import os

from iterationlayer import IterationLayer

client = IterationLayer(api_key=os.environ["API_KEY"])

result = client.transform_image(

file={

"type": "url",

"name": "product.png",

"url": "https://example.com/large-product-photo.png",

},

operations=[

{"type": "resize", "width_in_px": 800, "height_in_px": 600, "fit": "inside"},

{"type": "convert", "format": "webp", "quality": 85},

],

)

client := iterationlayer.NewClient(os.Getenv("API_KEY"))

result, err := client.TransformImage(iterationlayer.TransformImageRequest{

File: iterationlayer.NewFileFromURL(

"product.png",

"https://example.com/large-product-photo.png",

),

Operations: []iterationlayer.TransformOperation{

iterationlayer.NewResizeOperation(800, 600, "inside"),

iterationlayer.NewConvertOperation("webp"),

},

})

Your server allocates zero memory for image decoding. The container can’t OOM from image processing because no image processing happens on your infrastructure.

Failure 2: Puppeteer Zombie Processes

If your pipeline generates images from HTML templates — social cards, certificates, OG images — you’re probably running Puppeteer or Playwright with a headless Chromium instance. The template approach makes sense: designers work in HTML/CSS, you inject data, screenshot the result.

Until the zombies show up.

Error: Navigation timeout of 30000 ms exceeded

Error: Protocol error (Target.createTarget): Target closed.

Error: Browser was disconnected

And in your process list:

USER PID %MEM COMMAND

node 142 0.0 /usr/bin/chromium --headless --disable-gpu

node 287 0.0 /usr/bin/chromium --headless --disable-gpu

node 401 0.0 /usr/bin/chromium --headless --disable-gpu

...

node 8891 0.0 /usr/bin/chromium --headless --disable-gpu

Fifty orphaned Chromium processes, each holding 50-100 MB of memory, none of them doing anything. The parent Node process crashed or timed out, but nobody called browser.close(). Or a page navigation hung, the timeout fired, but the browser process stayed alive.
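The fix for the missing browser.close() is structural: tie the child process’s lifetime to a scope that cleans up on every exit path, including exceptions. In Node, that means wrapping the work in try/finally. The sketch below shows the same pattern in Python, using a sleeping child process as a stand-in for Chromium. The helper name and the 5-second shutdown timeout are assumptions.

```python
import subprocess
import sys
from contextlib import contextmanager


@contextmanager
def managed_process(argv: list[str], shutdown_timeout_s: float = 5.0):
    """Start a child process and guarantee it is gone when the block exits."""
    proc = subprocess.Popen(argv)
    try:
        yield proc
    finally:
        if proc.poll() is None:      # still running
            proc.terminate()         # ask politely first
            try:
                proc.wait(timeout=shutdown_timeout_s)
            except subprocess.TimeoutExpired:
                proc.kill()          # force-kill if it ignores the request
                proc.wait()          # reap it so no zombie entry remains


# Stand-in for a headless browser: a child that would otherwise run for a minute.
stand_in = [sys.executable, "-c", "import time; time.sleep(60)"]

try:
    with managed_process(stand_in) as proc:
        raise TimeoutError("simulated navigation timeout")
except TimeoutError:
    pass

print(proc.poll() is not None)  # True: the child was terminated despite the error
```

This only helps while the parent is alive to run the cleanup. If the parent itself is killed, you still need an init process or supervisor in the container to reap the leftovers.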

The deeper problem: headless Chromium is a full browser engine. You’re running what is effectively Chrome in a Docker container to take screenshots. Each instance spins up a renderer process, a GPU process (even in headless mode), and a utility process. Under load, that’s hundreds of processes competing for memory and CPU.

Common failure triggers:

- Fonts not installed in the container. The template references “Inter” but the Docker image only has Liberation fonts. Chromium substitutes a fallback, the layout shifts, and the screenshot looks broken. It’s not a crash but a silent correctness bug, which is worse because nothing alerts you.

- External resource timeouts. The template loads a Google Font or an external image. The CDN is slow. Puppeteer’s navigation timeout fires. The page is half-rendered. You screenshot garbage.

- Connection pool exhaustion. You reuse a single browser instance for concurrency, but Chromium’s page count hits its internal limit. New browser.newPage() calls hang indefinitely.
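Two of these triggers, slow external resources and unbounded page creation, come down to missing limits. Here is a minimal sketch of both limits, using asyncio with sleeping coroutines standing in for real renders. The function names, the cap of 4 concurrent pages, and the timeouts are assumptions.

```python
import asyncio


async def render_with_deadline(render, deadline_s: float, max_pages: asyncio.Semaphore):
    """Run one render under a hard deadline and a cap on concurrent pages."""
    async with max_pages:  # wait here instead of opening unlimited pages
        try:
            return await asyncio.wait_for(render(), timeout=deadline_s)
        except asyncio.TimeoutError:
            return None    # caller decides: retry, fallback image, or fail


async def main():
    max_pages = asyncio.Semaphore(4)  # assumed cap; tune to your container

    async def fast_render():
        await asyncio.sleep(0.01)
        return b"png-bytes"

    async def hung_render():
        await asyncio.sleep(60)       # simulates a page waiting on a slow CDN
        return b"never-returned"

    ok = await render_with_deadline(fast_render, 1.0, max_pages)
    timed_out = await render_with_deadline(hung_render, 0.05, max_pages)
    return ok, timed_out


print(asyncio.run(main()))  # (b'png-bytes', None)
```

Returning None on timeout, rather than a half-rendered screenshot, turns a silent correctness bug into an explicit failure you can count and alert on.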

The API alternative: Iteration Layer’s Image Generation API takes a layer-based definition — not HTML, but a structured JSON payload describing dimensions, text layers, image layers, colors, and positions. No browser engine. No font installation. No zombie processes.

curl -X POST https://api.iterationlayer.com/image-generation/v1/generate \

-H "Authorization: Bearer $API_KEY" \

-H "Content-Type: application/json" \

-d '{

"dimensions": {

"width_in_px": 1200,

"height_in_px": 630

},

"layers": [

{

"type": "solid-color",

"index": 0,

"hex_color": "#1a1a2e"

},

{

"type": "text",

"index": 1,

"text": "Why Image Pipelines Break at 3am",

"font_name": "Inter",

"font_size_in_px": 48,

"text_color": "#ffffff",

"position": { "x_in_px": 60, "y_in_px": 60 },

"dimensions": { "width_in_px": 1080, "height_in_px": 200 },

"font_weight": "bold"

},

{

"type": "text",

"index": 2,

"text": "iterationlayer.com",

"font_name": "Inter",

"font_size_in_px": 24,

"text_color": "#888888",

"position": { "x_in_px": 60, "y_in_px": 560 },

"dimensions": { "width_in_px": 400, "height_in_px": 40 }

}

],

"output_format": "png"

}'

import { IterationLayer } from "iterationlayer";

const client = new IterationLayer({ apiKey: process.env.API_KEY });

const result = await client.generateImage({

dimensions: { width_in_px: 1200, height_in_px: 630 },

layers: [

{

type: "solid-color",

index: 0,

hex_color: "#1a1a2e",

},

{

type: "text",

index: 1,

text: "Why Image Pipelines Break at 3am",

font_name: "Inter",

font_size_in_px: 48,

text_color: "#ffffff",

position: { x_in_px: 60, y_in_px: 60 },

dimensions: { width_in_px: 1080, height_in_px: 200 },

font_weight: "bold",

},

{

type: "text",

index: 2,

text: "iterationlayer.com",

font_name: "Inter",

font_size_in_px: 24,

text_color: "#888888",

position: { x_in_px: 60, y_in_px: 560 },

dimensions: { width_in_px: 400, height_in_px: 40 },

},

],

output_format: "png",

});

import os

from iterationlayer import IterationLayer

client = IterationLayer(api_key=os.environ["API_KEY"])

result = client.generate_image(

dimensions={"width_in_px": 1200, "height_in_px": 630},

layers=[

{

"type": "solid-color",

"index": 0,

"hex_color": "#1a1a2e",

},

{

"type": "text",

"index": 1,

"text": "Why Image Pipelines Break at 3am",

"font_name": "Inter",

"font_size_in_px": 48,

"text_color": "#ffffff",

"position": {"x_in_px": 60, "y_in_px": 60},

"dimensions": {"width_in_px": 1080, "height_in_px": 200},

"font_weight": "bold",

},

{

"type": "text",

"index": 2,

"text": "iterationlayer.com",

"font_name": "Inter",

"font_size_in_px": 24,

"text_color": "#888888",

"position": {"x_in_px": 60, "y_in_px": 560},

"dimensions": {"width_in_px": 400, "height_in_px": 40},

},

],

output_format="png",

)

client := iterationlayer.NewClient(os.Getenv("API_KEY"))

width1080 := 1080

height200 := 200

width400 := 400

height40 := 40

result, err := client.GenerateImage(iterationlayer.GenerateImageRequest{

Dimensions: iterationlayer.Dimensions{WidthInPx: 1200, HeightInPx: 630},

Layers: []iterationlayer.Layer{

iterationlayer.SolidColorLayer{

Type: "solid-color",

Index: 0,

HexColor: "#1a1a2e",

},

iterationlayer.TextLayer{

Type: "text",

Index: 1,

Text: "Why Image Pipelines Break at 3am",

FontName: "Inter",

FontSizeInPx: 48,

TextColor: "#ffffff",

Position: iterationlayer.Position{XInPx: 60, YInPx: 60},

Dimensions: iterationlayer.Dimensions{WidthInPx: 1080, HeightInPx: 200},

FontWeight: stringPtr("bold"),

},

iterationlayer.TextLayer{

Type: "text",

Index: 2,

Text: "iterationlayer.com",

FontName: "Inter",

FontSizeInPx: 24,

TextColor: "#888888",

Position: iterationlayer.Position{XInPx: 60, YInPx: 560},

Dimensions: iterationlayer.Dimensions{WidthInPx: 400, HeightInPx: 40},

},

},

OutputFormat: stringPtr("png"),

})

No Chromium. No zombie processes. No font installation in your Dockerfile. The image is rendered server-side from a structured definition, and the result comes back as a binary buffer.

Failure 3: ImageMagick CVEs

ImageMagick has been the Swiss Army knife of image processing for decades. It handles hundreds of formats, runs everywhere, and has a feature for every conceivable operation. It also has a security track record that should make anyone running it in production nervous.

A few highlights from the CVE database:

- CVE-2016-3714 (ImageTragick): A specially crafted image file could execute arbitrary commands on the server through ImageMagick’s delegate system. The convert command could be tricked into running shell commands via filenames. This wasn’t a theoretical risk: it was actively exploited in the wild.

- CVE-2022-44268: A crafted PNG file could read arbitrary files from the server. An attacker uploads a PNG, your pipeline processes it with ImageMagick, and the output PNG contains the contents of /etc/passwd embedded in its metadata.

- CVE-2023-34151: Heap buffer overflow in the TIFF coder. A malformed TIFF file triggers undefined behavior during processing.

The policy.xml file exists to restrict dangerous operations, but it’s easy to misconfigure. The default policy in many Linux distributions is permissive. And every new CVE means another round of “patch ImageMagick across all environments before someone exploits it.”

The architectural problem: ImageMagick processes untrusted input (user-uploaded files) in the same environment where your application code runs. Every vulnerability in ImageMagick is a vulnerability in your application.
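If you do keep ImageMagick in the loop, one common hardening step is to verify a file’s magic bytes against an allowlist before any decoder sees it, ignoring the extension and the client-supplied MIME type. Here is a minimal sketch; the set of allowed formats and the function name are assumptions.

```python
from typing import Optional


def sniff_format(head: bytes) -> Optional[str]:
    """Identify a file from its leading bytes; return None for anything not allowlisted."""
    if head.startswith(b"\xff\xd8\xff"):
        return "jpeg"
    if head.startswith(b"\x89PNG\r\n\x1a\n"):
        return "png"
    if head[:6] in (b"GIF87a", b"GIF89a"):
        return "gif"
    if head[:4] == b"RIFF" and head[8:12] == b"WEBP":
        return "webp"
    if head[:4] in (b"II*\x00", b"MM\x00*"):  # little-endian and big-endian TIFF
        return "tiff"
    return None


# A real PNG signature passes.
print(sniff_format(b"\x89PNG\r\n\x1a\n" + b"\x00" * 8))  # png

# A text-based vector script renamed to "photo.png" does not.
print(sniff_format(b"push graphic-context\nviewbox 0 0 640 480\n"))  # None
```

This narrows the set of coders an attacker can reach. It does nothing about bugs in the decoders for the formats you do allow, which is why the patching burden remains.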

The API alternative: move untrusted file processing off your infrastructure entirely. The file never touches your server. It’s processed on isolated infrastructure with no access to your application environment, your file system, or your network.

curl -X POST https://api.iterationlayer.com/image-transformation/v1/transform \

-H "Authorization: Bearer $API_KEY" \

-H "Content-Type: application/json" \

-d '{

"file": {

"type": "url",

"name": "user-upload.tiff",

"url": "https://example.com/uploads/user-upload.tiff"

},

"operations": [

{

"type": "resize",

"width_in_px": 1200,

"height_in_px": 1200,

"fit": "inside"

},

{

"type": "convert",

"format": "webp",

"quality": 90

}

]

}'

import { IterationLayer } from "iterationlayer";

const client = new IterationLayer({ apiKey: process.env.API_KEY });

const result = await client.transformImage({

file: {

type: "url",

name: "user-upload.tiff",

url: "https://example.com/uploads/user-upload.tiff",

},

operations: [

{ type: "resize", width_in_px: 1200, height_in_px: 1200, fit: "inside" },

{ type: "convert", format: "webp", quality: 90 },

],

});

import os

from iterationlayer import IterationLayer

client = IterationLayer(api_key=os.environ["API_KEY"])

result = client.transform_image(

file={

"type": "url",

"name": "user-upload.tiff",

"url": "https://example.com/uploads/user-upload.tiff",

},

operations=[

{"type": "resize", "width_in_px": 1200, "height_in_px": 1200, "fit": "inside"},

{"type": "convert", "format": "webp", "quality": 90},

],

)

client := iterationlayer.NewClient(os.Getenv("API_KEY"))

result, err := client.TransformImage(iterationlayer.TransformImageRequest{

File: iterationlayer.NewFileFromURL(

"user-upload.tiff",

"https://example.com/uploads/user-upload.tiff",

),

Operations: []iterationlayer.TransformOperation{

iterationlayer.NewResizeOperation(1200, 1200, "inside"),

iterationlayer.NewConvertOperation("webp"),

},

})

Even if a malicious file exploits a vulnerability in image processing, it’s happening on isolated infrastructure: not on your servers, not in your network, not anywhere near your application data. That’s not a patch you need to apply. It’s a class of vulnerability you’ve eliminated from your attack surface.

Failure 4: CMYK, HEIF, and the Format Edge Cases

Your pipeline works perfectly with the JPEG and PNG files you tested with. Then production happens.

Error: Input file has CMYK color space - conversion to sRGB required

Error: Unsupported image format: image/heif

Error: Input buffer contains unsupported image format

Sharp: VipsJpeg: Premature end of input file

The edge cases:

- CMYK color space. Professional print files and some stock photography use CMYK instead of RGB. Most web-focused image libraries assume RGB input. When a CMYK JPEG hits your Sharp pipeline, you get either a corrupted output with inverted colors or an outright crash. Handling it correctly requires ICC profile conversion, which means shipping color profiles in your Docker image and adding conversion logic before every operation.

- HEIF/HEIC format. Every iPhone since 2017 defaults to HEIF for photos. But libvips (which Sharp wraps) needs to be compiled with HEIF support, which requires libheif, which requires specific codecs. The default Sharp npm install doesn’t include HEIF support on all platforms. So the first time an iPhone user uploads a photo, your pipeline returns “unsupported format”, and you spend an afternoon debugging Docker build args.

- Truncated files. Users upload files over spotty connections. The file appears valid (correct magic bytes, correct extension) but the data is truncated mid-stream. ImageMagick might output a half-decoded image. Sharp might throw “premature end of input.” Neither tells you upfront that the file is corrupt; the error surfaces mid-processing.

- Animated formats. A user uploads a GIF or animated WebP. Your resize operation processes only the first frame and returns a static image. No error, no warning — just silent data loss that takes weeks to notice.

- Embedded color profiles. An image has an embedded ICC profile that specifies Adobe RGB or ProPhoto RGB. Your pipeline ignores it and processes as sRGB. Colors shift subtly. Product photos look wrong. The support ticket reads “the colors on the website don’t match the original.”

Each of these is solvable. But each fix is another branch in your processing code, another dependency in your Docker image, another test case you need to maintain. The format matrix multiplies: CMYK HEIF, animated WebP with embedded profile, truncated TIFF with CMYK — and each combination needs its own handling.
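To give a sense of what one such branch looks like, here is a rough sketch of a truncation check that runs before decoding. It relies on the fact that a complete PNG ends with an IEND chunk and a complete JPEG ends with an end-of-image marker. It is a heuristic only: the function name and the 64-byte tail window are assumptions, other formats are treated as suspect, and a truncated JPEG could still pass if those two marker bytes happen to appear in its last 64 bytes.

```python
PNG_TRAILER = bytes.fromhex("0000000049454e44ae426082")  # zero-length IEND chunk plus its CRC
JPEG_EOI = b"\xff\xd9"                                   # end-of-image marker


def looks_complete(data: bytes) -> bool:
    """Heuristic: does the file end the way its format says it should?"""
    if data.startswith(b"\x89PNG\r\n\x1a\n"):
        return data.endswith(PNG_TRAILER)
    if data.startswith(b"\xff\xd8\xff"):
        # Some encoders append padding or metadata after EOI, so search the tail.
        return JPEG_EOI in data[-64:]
    return False  # unknown format: treat as suspect rather than guess


# Synthetic JPEG-shaped payload: start marker, filler, end marker.
jpeg = b"\xff\xd8\xff\xe0" + b"\x00" * 100 + JPEG_EOI
print(looks_complete(jpeg))        # True
print(looks_complete(jpeg[:-10]))  # False
```

Multiply this by every format and every edge case in the list above, and the size of the maintenance problem becomes clear.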

The API alternative: let the service handle format detection, color space conversion, and edge case handling. You specify the output format and quality you want. The API figures out the input.

curl -X POST https://api.iterationlayer.com/image-transformation/v1/transform \

-H "Authorization: Bearer $API_KEY" \

-H "Content-Type: application/json" \

-d '{

"file": {

"type": "url",

"name": "iphone-photo.heic",

"url": "https://example.com/uploads/iphone-photo.heic"

},

"operations": [

{

"type": "smart_crop",

"width_in_px": 800,

"height_in_px": 800

},

{

"type": "convert",

"format": "webp",

"quality": 85

}

]

}'

import { IterationLayer } from "iterationlayer";

const client = new IterationLayer({ apiKey: process.env.API_KEY });

const result = await client.transformImage({

file: {

type: "url",

name: "iphone-photo.heic",

url: "https://example.com/uploads/iphone-photo.heic",

},

operations: [

{ type: "smart_crop", width_in_px: 800, height_in_px: 800 },

{ type: "convert", format: "webp", quality: 85 },

],

});

import os

from iterationlayer import IterationLayer

client = IterationLayer(api_key=os.environ["API_KEY"])

result = client.transform_image(

file={

"type": "url",

"name": "iphone-photo.heic",

"url": "https://example.com/uploads/iphone-photo.heic",

},

operations=[

{"type": "smart_crop", "width_in_px": 800, "height_in_px": 800},

{"type": "convert", "format": "webp", "quality": 85},

],

)

client := iterationlayer.NewClient(os.Getenv("API_KEY"))

result, err := client.TransformImage(iterationlayer.TransformImageRequest{

File: iterationlayer.NewFileFromURL(

"iphone-photo.heic",

"https://example.com/uploads/iphone-photo.heic",

),

Operations: []iterationlayer.TransformOperation{

iterationlayer.NewSmartCropOperation(800, 800),

iterationlayer.NewConvertOperation("webp"),

},

})

HEIF input, CMYK input, truncated files, embedded color profiles: same API call. The service normalizes the input before applying your operations. You don’t need to know or care what color space the source file uses.

Failure 5: Docker OOM Kills

This one isn’t specific to any single tool. It’s the emergent failure mode of running image processing in containers with memory limits — which is to say, every production deployment.

State: OOMKilled

Reason: The container was killed because it exceeded its memory limit

Exit Code: 137

The chain of events:

- Your container has a 2 GB memory limit (generous, you thought).

- Three concurrent image processing jobs start.

- Job 1: a 6,000 x 4,000 JPEG. Decoded, that’s ~96 MB of raw pixel data.

- Job 2: a 12,000 x 8,000 PNG from a scanner. Decoded: ~384 MB.

- Job 3: a batch of 40 thumbnails, processed sequentially but with buffer reuse issues.

- Peak memory: Node runtime (200 MB) + libvips cache (configurable, but defaults to generous) + decoded buffers (480 MB) + output buffers (varies) + V8 overhead.

- The kernel’s OOM killer fires. Container dies. All three jobs fail. The queue doesn’t know which images were partially processed. Your retry logic re-enqueues everything, including the 12,000 x 8,000 PNG that caused the problem.

- The container restarts. The same PNG comes back. The container dies again.

This is a retry storm caused by a poison pill — one input that will always exceed your memory limit. Without explicit dimension checks and per-job memory budgeting, the same file keeps killing your pipeline.
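Poison pill detection has one subtlety in the OOM case: the worker dies before it can record a failure. So the attempt has to be counted before processing starts, in a store that outlives the worker. The sketch below uses a plain dict as a stand-in for that durable store. The class name, the limit of 3 attempts, and the job ID are all hypothetical.

```python
class AttemptTracker:
    """Count attempts per job before processing, so a crash still leaves a record."""

    def __init__(self, store: dict, max_attempts: int = 3):
        self.store = store                # stand-in for a durable store outside the worker
        self.max_attempts = max_attempts
        self.dead_letter: list[str] = []

    def begin_attempt(self, job_id: str) -> bool:
        """Return True if the job may run, False if it has been quarantined."""
        attempts = self.store.get(job_id, 0) + 1
        self.store[job_id] = attempts
        if attempts > self.max_attempts:
            self.dead_letter.append(job_id)
            return False
        return True


durable = {}                              # survives "restarts" in this simulation
outcomes = []
for _ in range(5):                        # five deliveries of the same poison job
    tracker = AttemptTracker(durable)     # a fresh worker after each OOM kill
    outcomes.append(tracker.begin_attempt("scan-12000x8000.png"))

print(outcomes)  # [True, True, True, False, False]
```

Many queue systems expose a delivery count that can play the role of the store, which avoids adding another moving part.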

The standard mitigations:

- Set sharp.cache(false) or limit the libvips cache.

- Add dimension limits before processing.

- Process one image at a time (kills throughput).

- Increase container memory (expensive, and just raises the ceiling for the next oversized input).

- Add a circuit breaker that skips files above a certain dimension.

All of these are operational band-aids. The fundamental problem is that image decoding allocates memory proportional to the pixel dimensions of the input, and you can’t predict or control what users upload.
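Per-job memory budgeting can be sketched as an admission check: estimate each job’s decoded footprint from its dimensions and only start it if the total stays under a fixed budget. The class name, the 400 MB budget, and the 4-bytes-per-pixel estimate are assumptions, and the estimate ignores output buffers and library caches.

```python
import threading


class MemoryBudget:
    """Admit decode jobs only while their estimated footprint fits a fixed budget."""

    def __init__(self, budget_bytes: int):
        self.budget_bytes = budget_bytes
        self.in_use = 0
        self._lock = threading.Lock()

    def try_acquire(self, width_px: int, height_px: int, bytes_per_pixel: int = 4) -> bool:
        needed = width_px * height_px * bytes_per_pixel
        with self._lock:
            if self.in_use + needed > self.budget_bytes:
                return False  # caller should requeue, or reject if needed > budget
            self.in_use += needed
            return True

    def release(self, width_px: int, height_px: int, bytes_per_pixel: int = 4) -> None:
        with self._lock:
            self.in_use -= width_px * height_px * bytes_per_pixel


budget = MemoryBudget(400_000_000)        # assumed 400 MB reserved for decoded pixels
print(budget.try_acquire(6_000, 4_000))   # True  (96 MB)
print(budget.try_acquire(12_000, 8_000))  # False (384 MB more would exceed the budget)
budget.release(6_000, 4_000)
print(budget.try_acquire(12_000, 8_000))  # True  (fits once the first job is done)
```

A job whose estimate exceeds the whole budget on its own can never be admitted, so it should be rejected outright rather than requeued.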

The API alternative: the same pattern as the memory leak fix, but at the infrastructure level. Your container never decodes an image. It sends a reference (URL) and a list of operations. Memory usage on your side is constant regardless of input size — just the HTTP request and response payloads.

For images that need to end up under a specific file size (email attachments, marketplace listings with size limits), the API even handles the iterative quality reduction for you:

curl -X POST https://api.iterationlayer.com/image-transformation/v1/transform \

-H "Authorization: Bearer $API_KEY" \

-H "Content-Type: application/json" \

-d '{

"file": {

"type": "url",

"name": "hero-image.png",

"url": "https://example.com/uploads/hero-image.png"

},

"operations": [

{

"type": "resize",

"width_in_px": 1600,

"height_in_px": 900,

"fit": "cover"

},

{

"type": "compress_to_size",

"max_file_size_in_bytes": 500000

}

]

}'

import { IterationLayer } from "iterationlayer";

const client = new IterationLayer({ apiKey: process.env.API_KEY });

const result = await client.transformImage({

file: {

type: "url",

name: "hero-image.png",

url: "https://example.com/uploads/hero-image.png",

},

operations: [

{ type: "resize", width_in_px: 1600, height_in_px: 900, fit: "cover" },

{ type: "compress_to_size", max_file_size_in_bytes: 500_000 },

],

});

import os

from iterationlayer import IterationLayer

client = IterationLayer(api_key=os.environ["API_KEY"])

result = client.transform_image(

file={

"type": "url",

"name": "hero-image.png",

"url": "https://example.com/uploads/hero-image.png",

},

operations=[

{"type": "resize", "width_in_px": 1600, "height_in_px": 900, "fit": "cover"},

{"type": "compress_to_size", "max_file_size_in_bytes": 500_000},

],

)

client := iterationlayer.NewClient(os.Getenv("API_KEY"))

result, err := client.TransformImage(iterationlayer.TransformImageRequest{

File: iterationlayer.NewFileFromURL(

"hero-image.png",

"https://example.com/uploads/hero-image.png",

),

Operations: []iterationlayer.TransformOperation{

iterationlayer.NewResizeOperation(1600, 900, "cover"),

iterationlayer.NewCompressToSizeOperation(500_000),

},

})

The compress_to_size operation handles the iterative quality and dimension adjustment to hit your target file size. In a self-hosted pipeline, this requires a loop: compress, check size, reduce quality, compress again. With the API, it’s a single operation in the chain.
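For comparison, here is roughly what the self-hosted loop looks like. This is a sketch under stated assumptions, not a description of how the API implements the operation: it adjusts quality only, takes the encoder as a hypothetical callback, and assumes output size grows with quality. A binary search keeps the number of encode passes small.

```python
from typing import Callable, Optional


def compress_to_size(
    encode: Callable[[int], bytes],
    max_bytes: int,
    min_quality: int = 10,
    max_quality: int = 95,
) -> Optional[bytes]:
    """Binary-search the highest quality whose encoded output fits max_bytes."""
    best = None
    low, high = min_quality, max_quality
    while low <= high:
        quality = (low + high) // 2
        candidate = encode(quality)
        if len(candidate) <= max_bytes:
            best = candidate    # fits: remember it and try a higher quality
            low = quality + 1
        else:
            high = quality - 1  # too large: try a lower quality
    return best                 # None means even min_quality was too large


# Fake encoder for demonstration: output size is 1,000 bytes per quality point.
def fake_encode(quality: int) -> bytes:
    return b"x" * (quality * 1_000)


result = compress_to_size(fake_encode, max_bytes=50_000)
print(len(result))  # 50000
```

A None result is the point where a real pipeline would fall back to reducing dimensions and run the search again, and every pass is a full encode held in your container’s memory.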

The Underlying Pattern

All five failure modes share the same root cause: you’re running complex, stateful, memory-intensive processing on the same infrastructure as your application code. Every image your pipeline touches is an untrusted input that could be any size, any format, any color space, potentially malicious, and potentially corrupt.

Managing that safely requires:

- Memory budgeting and backpressure

- Format detection and normalization

- Security patching and policy configuration

- Process lifecycle management

- Retry logic with poison pill detection

- Container resource tuning

That’s a lot of infrastructure for what amounts to “resize this image and give it back to me as WebP.”

When the DIY Approach Still Makes Sense

To be fair, self-hosted pipelines win in specific scenarios:

- Latency-critical paths. If you need sub-10ms image processing (like a real-time video filter), a local library with pre-loaded images will always beat a network round-trip.

- Massive scale with predictable inputs. If you process millions of images per day and they’re all the same format, same dimensions, same operations — the per-unit cost of an optimized self-hosted pipeline will be lower than any API.

- Offline or air-gapped environments. If your infrastructure can’t make outbound HTTP calls, an API isn’t an option.

For most workloads — user uploads, content pipelines, batch processing, on-demand transformations — the API approach eliminates the entire class of problems described above. Not by solving them better, but by moving them off your plate entirely.

Beyond Single Images: Composable Workflows

The real shift happens when image processing is just one step in a larger workflow. Convert a supplier PDF to markdown for review, extract product data from it, pull missing details from a public product page with Website Extraction, transform each product image to marketplace specifications, generate a listing card with the product details overlaid — all chained together with the same authentication, the same credit pool, and the same error format.

With a DIY approach, that’s three separate tools (a PDF parser, an image processor, a template renderer), three sets of dependencies, three Docker build steps, and custom glue code converting output formats between each step. One of those tools breaks, and you’re debugging at 3am.

With a composable API platform, each step is a single HTTP call. The output of one feeds into the next. No glue code, no format conversion, no multi-tool Docker image that takes 15 minutes to build.

Getting Started

If your pipeline is currently working and you’re not getting paged at 3am, you might not need to change anything. But if you recognize any of the failure modes above — if you’ve written retry logic around ImageMagick, debugged Sharp memory leaks, or cleaned up Puppeteer zombies — it might be worth trying the API approach for your next feature instead of extending the existing pipeline.

Start with the operation that causes the most pain. For most teams, that’s image transformation, covered in the Image Transformation docs. If you’re generating images from templates, check the Image Generation docs. Both use the same SDK, same auth, same credit pool.

TypeScript and Python SDKs are available on npm and PyPI. The Go SDK is on GitHub. Or just use curl — the API is standard JSON over HTTPS.

Your on-call rotation will thank you.